Engineering the Industrial Intelligence Revolution: A Comprehensive Roadmap for Factory-Ready AI Transformation in 2026

The contemporary industrial landscape is characterized by a profound and unsettling paradox. While global investment in artificial intelligence has surged to record heights, with American enterprises alone funneling between $35 billion and $40 billion into generative AI initiatives by 2025, the actual yield on these investments remains strikingly low. Research indicates that a staggering 95% of industrial AI pilots fail to transition into scaled production or deliver any measurable impact on the profit and loss statement. This discrepancy suggests that the "Smart Factory" is currently more of a mirage than a functional reality for the vast majority of manufacturers. The failure is not a result of insufficient computational power or the lack of sophisticated algorithms; rather, it is the symptom of a systemic neglect of the fundamental "plumbing" required to sustain intelligent systems. In 2026, the competitive divide is defined by the ability to solve for connectivity, context, and quality.

Introduction: The Mirage of the Smart Factory

The current state of industrial AI is defined by what researchers have termed the GenAI Divide. On one side of this chasm are AI-native companies that have successfully integrated large-scale models into their core workflows; on the other are traditional enterprises that remain trapped in "pilot purgatory." The latter group represents the 95% of organizations whose AI programs stall out after the initial demo phase. These failed experiments are often described as "science projects"—impressive in a controlled environment but structurally incapable of adapting to the messy, real-world chaos of a production floor.

The Statistics of Stagnation

The magnitude of the waste in the current AI cycle is unprecedented. Global spending on enterprise AI reached $644 billion in 2025, yet the attrition rate for these projects is alarming. Analysis from McKinsey suggests that 42% of companies abandoned the majority of their AI initiatives in 2025, a significant increase from 17% in the previous year. This trend indicates a growing disillusionment as the initial hype meets the reality of industrial data constraints.

The "dirty secret" of the modern factory is that it is not suffering from a lack of intelligence but from an overwhelming accumulation of data debt. Data debt is the implied cost of future rework that accumulates when organizations opt for quick-fix, point-to-point integrations instead of building a sustainable data foundation. It manifests in five critical forms: integration, data, process, application, and security debt. When an organization attempts to layer AI on top of this unstable environment, the resulting system becomes brittle. In fact, most current generative AI systems do not retain feedback, adapt to context, or improve over time, making them static snapshots of a dynamic reality.

The Thesis of Foundation

The central thesis for industrial leaders in 2026 is that AI success is a byproduct of a robust data foundation. AI is the "last mile" of a journey that begins with connectivity and ends with contextualized, high-quality data. Without addressing the underlying architecture, AI initiatives are merely expensive experiments that lack the memory and adaptability required for mission-critical work. The transition from reactive firefighting to agentic autonomy depends entirely on the transition from fragmented silos to a unified data fabric.

The Legacy Trap: Why Brownfield Factories Are Breaking AI

The majority of the world's manufacturing capacity resides in "brownfield" facilities—factories that have been operational for decades. These environments are characterized by a high average age of fixed assets, currently recorded at 24 years, the oldest since the mid-20th century. These machines, while physically durable, were never designed to be cloud-connected nodes in an intelligent network. This creates a "Legacy Trap" where the existing infrastructure actively resists AI transformation.

The Protocol Tower of Babel

The most immediate barrier in brownfield environments is the lack of a common language. A single factory floor might contain Programmable Logic Controllers (PLCs) from five different decades and ten different vendors, each communicating through proprietary protocols. This creates a "Protocol Tower of Babel" where extracting data requires custom, brittle integrations that cost upwards of $300,000 per year per system to maintain.

The traditional model for managing these systems, the hierarchical ISA-95 automation pyramid, further complicates matters. In this model, data must travel sequentially from the sensor to the PLC, then to the SCADA system, the MES, and finally the ERP. This rigid hierarchy adds latency at every hop and strips data of its real-time value. By the time information reaches an AI model at the top of the stack, it is no longer actionable for real-time optimization.

The Context Crisis

Even when data can be extracted from legacy systems, it is often fundamentally useless to an AI model because it lacks context. This is known as the "Context Crisis." A raw sensor reading such as "Pressure: 42" is just a number. For an AI to make a meaningful decision, it needs to know the metadata: which machine produced the reading? What was the active batch or order? What were the environmental conditions, such as humidity or ambient temperature, at that exact millisecond?

In legacy environments, this metadata is rarely captured alongside the signal. Instead, it resides in fragmented islands of truth—the shift log might be in a spreadsheet, the maintenance history in a separate CMMS, and the real-time pressure in a local PLC register. This fragmentation starves machine learning models of the situational awareness required for accurate predictions. When models are trained on such context-poor data, they suffer from higher error rates and lower explainability, leading to a "verification tax" where human operators must spend significant time double-checking AI outputs.
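As a rough illustration of what contextualization means in practice, the sketch below joins a bare reading with the machine and batch it belongs to. The tag name, the lookup tables, and the `contextualize` function are hypothetical stand-ins for an asset registry and MES batch records, not part of any specific product.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical lookup tables standing in for an asset registry and MES batch records.
TAG_TO_MACHINE = {"PLC7.DB12.PRESSURE": "press-07"}
# Each entry: (machine_id, start_ms inclusive, end_ms exclusive, batch_id)
BATCH_WINDOWS = [("press-07", 1_000, 5_000, "batch-A")]


@dataclass(frozen=True)
class RawReading:
    tag: str            # register or tag name as exposed by the controller
    value: float        # the bare number, e.g. "Pressure: 42"
    timestamp_ms: int


@dataclass(frozen=True)
class ContextualReading:
    machine_id: str
    value: float
    timestamp_ms: int
    batch_id: Optional[str]          # None when no batch was active
    ambient_temp_c: Optional[float]  # None when no environmental sensor is available


def contextualize(reading: RawReading,
                  ambient_temp_c: Optional[float] = None) -> ContextualReading:
    """Attach machine, batch, and environmental context to a raw reading."""
    machine_id = TAG_TO_MACHINE[reading.tag]
    # Find the batch whose time window contains the reading's timestamp.
    batch_id = next(
        (batch for machine, start, end, batch in BATCH_WINDOWS
         if machine == machine_id and start <= reading.timestamp_ms < end),
        None,
    )
    return ContextualReading(machine_id, reading.value, reading.timestamp_ms,
                             batch_id, ambient_temp_c)


enriched = contextualize(RawReading("PLC7.DB12.PRESSURE", 42.0, 1_500),
                         ambient_temp_c=21.5)
```

The point of the sketch is that the join happens once, at ingestion, so every downstream consumer receives the reading with its context already attached.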

Silos of Knowledge and the IT/OT Divide

The historical divide between Information Technology (IT) and Operational Technology (OT) remains one of the most significant cultural and technical barriers to AI implementation. IT teams are focused on security, data governance, and cloud scalability, while OT teams prioritize safety, uptime, and deterministic control. These two worlds operate on different timelines and utilize different standards, resulting in a disconnected nervous system for the factory.

This divide creates a situation where the institutional memory of the plant—the knowledge held by master technicians who have worked on the machines for 30 years—is never digitized. As the "silver tsunami" of retiring experts approaches, with 40% of the manufacturing workforce set to retire by 2030, the failure to integrate these knowledge silos becomes an existential threat. Without a unified system that captures both sensor data and human expertise, the factory is left with a broken link between planning decisions and shop-floor execution.

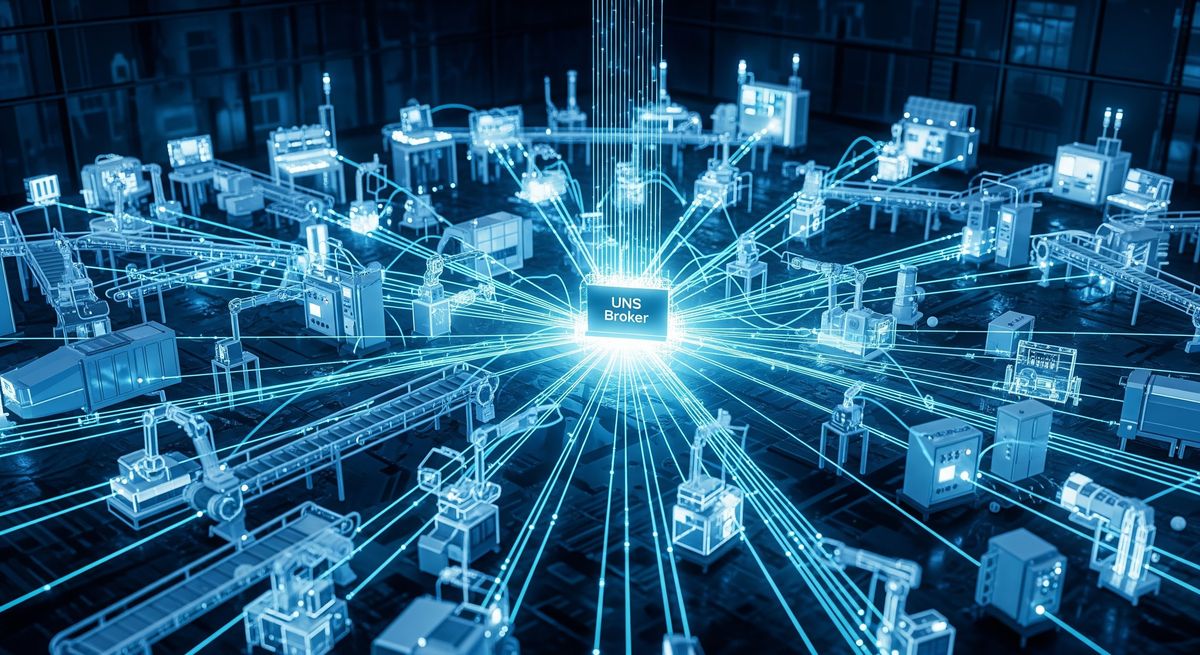

The Unified Namespace (UNS): Creating a Real-Time Nervous System

To move beyond the limitations of the Legacy Trap, manufacturers are increasingly adopting the Unified Namespace (UNS) architecture. The UNS represents a fundamental shift in how industrial data is managed, transitioning from the rigid, hierarchical ISA-95 model to a centralized, event-driven hub that acts as the real-time nervous system of the enterprise.

Transitioning to an Event-Driven Architecture

In a UNS architecture, every component—from the smallest vibration sensor to the corporate ERP—is treated as a node within a vast ecosystem. Instead of passing data through a multi-layered hierarchy, nodes publish their information to a central broker where it is instantly available to any other authorized node via a subscription model.

This "Publish/Subscribe" mechanism offers several advantages over traditional systems:

- Reduced Complexity: It eliminates the need for thousands of point-to-point connections, as every system only needs to connect once to the broker.

- Scalability: New machines or AI applications can be added to the network without disrupting existing workflows.

- Real-Time Accessibility: Data is updated by exception, ensuring the namespace always reflects the current state of the business.
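The publish/subscribe pattern and report-by-exception behavior described above can be sketched with a toy in-memory broker. This is a minimal illustration only: the `Broker` class and the topic string are assumptions made for the example, and a production UNS would typically sit on a real message broker rather than a Python dictionary.

```python
from typing import Any, Callable, Dict, List

Handler = Callable[[str, Any], None]


class Broker:
    """Toy in-memory hub: each system connects once and talks only to the broker."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = {}
        self._last_value: Dict[str, Any] = {}

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers.setdefault(topic, []).append(handler)
        # Late joiners immediately receive the current state of the topic.
        if topic in self._last_value:
            handler(topic, self._last_value[topic])

    def publish(self, topic: str, value: Any) -> bool:
        # Report by exception: ignore updates that do not change the state.
        if topic in self._last_value and self._last_value[topic] == value:
            return False
        self._last_value[topic] = value
        for handler in self._subscribers.get(topic, []):
            handler(topic, value)
        return True


broker = Broker()
seen = []
topic = "plant1/line2/pump3/pressure"  # hypothetical topic name
broker.subscribe(topic, lambda t, v: seen.append((t, v)))
broker.publish(topic, 42.0)   # delivered
broker.publish(topic, 42.0)   # unchanged, suppressed
broker.publish(topic, 43.5)   # delivered
```

Adding a new consumer, such as an AI application, is a single `subscribe` call; no existing publisher needs to change.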

A Single Source of Truth

The primary objective of a UNS is to create a consolidated environment where every department, from maintenance teams on the floor to the C-suite, sees the same real-time operational data. This eliminates discrepancies between reports and ensures that everyone is working from the same authoritative view of the system.

In 2026, the UNS is being implemented using a node-based approach where each asset class is defined by a standardized hierarchy. For example, a "Pump" node in the namespace would contain sub-nodes for its status, pressure, temperature, and maintenance history. By standardizing these naming conventions, AI models can instantly understand and correlate events from different production lines without the need for manual mapping.
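One way to picture a standardized node hierarchy is as a function that derives every topic for an asset from its class. The hierarchy levels, the `ASSET_CLASSES` table, and the names used below are illustrative assumptions; real deployments define their own levels and naming rules.

```python
from typing import Dict, List, Tuple

# Assumed hierarchy levels for this sketch, ordered from broadest to narrowest.
HIERARCHY: Tuple[str, ...] = ("enterprise", "site", "area", "line", "asset")

# Each asset class declares the sub-nodes every instance must expose.
ASSET_CLASSES: Dict[str, Tuple[str, ...]] = {
    "pump": ("status", "pressure", "temperature", "maintenance_history"),
}


def asset_topics(asset_class: str, **levels: str) -> List[str]:
    """Return the full topic path for every sub-node of one asset."""
    missing = [level for level in HIERARCHY if level not in levels]
    if missing:
        raise ValueError(f"missing hierarchy levels: {missing}")
    base = "/".join(levels[level] for level in HIERARCHY)
    return [f"{base}/{sub_node}" for sub_node in ASSET_CLASSES[asset_class]]


topics = asset_topics("pump", enterprise="acme", site="plant1",
                      area="utilities", line="line2", asset="pump3")
```

Because every pump on every line yields the same four sub-nodes, a model trained against one pump's topics can be pointed at another by changing only the path prefix.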

Semantic Interoperability and Standards

True intelligence requires not just data, but meaning. While basic protocols provide the transport mechanism, standards like Sparkplug B and OPC UA provide the definition.

- Sparkplug B: Standardizes the payload and topic structure of MQTT messages, ensuring that every data point includes essential metadata like timestamps, data types, and quality metrics.

- OPC UA: Focuses on describing the address space—a hierarchical graph of nodes that defines how industrial equipment relates to other data.

The integration of these standards allows for digital continuity from the edge to the cloud. For example, using companion specifications for robotics or machine tools, a manufacturer can define a robot arm as an object with standard attributes such as JointPosition or OperationMode. This allows AI-driven diagnostics to be deployed instantly across different vendor robots because the data is presented in a consistent format.
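To make the idea of a standardized topic structure concrete, the sketch below builds topics following the Sparkplug B namespace pattern `spBv1.0/<group_id>/<message_type>/<edge_node_id>/<device_id>`. The helper function and the example identifiers are hypothetical; the actual payload is a binary Protobuf message and is not modeled here, and the separate STATE topic used by host applications is deliberately left out.

```python
from typing import Optional

NAMESPACE = "spBv1.0"
# Node-level messages address an edge node; device-level messages add a device ID.
NODE_MESSAGE_TYPES = {"NBIRTH", "NDEATH", "NDATA", "NCMD"}
DEVICE_MESSAGE_TYPES = {"DBIRTH", "DDEATH", "DDATA", "DCMD"}


def sparkplug_topic(group_id: str, message_type: str, edge_node_id: str,
                    device_id: Optional[str] = None) -> str:
    """Build a Sparkplug B style topic, enforcing node vs. device addressing."""
    if message_type in NODE_MESSAGE_TYPES:
        if device_id is not None:
            raise ValueError(f"{message_type} is node-level and takes no device_id")
        return f"{NAMESPACE}/{group_id}/{message_type}/{edge_node_id}"
    if message_type in DEVICE_MESSAGE_TYPES:
        if device_id is None:
            raise ValueError(f"{message_type} is device-level and needs a device_id")
        return f"{NAMESPACE}/{group_id}/{message_type}/{edge_node_id}/{device_id}"
    raise ValueError(f"unknown message type: {message_type}")


# Hypothetical identifiers for a robot attached to an edge gateway on line 2.
data_topic = sparkplug_topic("PlantA", "DDATA", "Line2Gateway", "Robot1")
birth_topic = sparkplug_topic("PlantA", "NBIRTH", "Line2Gateway")
```

A fixed topic grammar like this is what lets a subscriber discover every device in a plant with a wildcard subscription instead of a hand-maintained list.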

Operationalizing Trust: Data Quality as a Performance Metric

One of the most significant reasons AI initiatives fail to scale is a lack of trust in the underlying data. "Garbage in, garbage out" is a well-known axiom, but in a manufacturing environment, the cost of "garbage" is quantified in millions of dollars of lost productivity. To succeed in 2026, organizations must treat data quality as a core performance metric.

The Financial Impact of Poor Data Quality

The cost of unplanned downtime is the most direct measure of the failure to operationalize data trust. Research indicates that unplanned equipment downtime costs the average Fortune 500 company $2.8 billion annually, representing approximately 11% of their revenue. When AI models for predictive maintenance or quality control are fed poor data, they produce false positives or false negatives that lead to catastrophic failures.

Furthermore, 31% of maintenance managers reported that downtime costs increased in 2025, primarily driven by inflation on parts and the aging of fixed assets. By operationalizing data trust, companies can achieve up to a 25% reduction in maintenance costs and a 10% to 20% increase in uptime.

To bridge the gap between raw sensor data and AI-ready intelligence, leading manufacturers are adopting medallion architecture pipelines. This architecture organizes data into three distinct layers, with each layer progressively refining the data for specific use cases.

- Bronze Layer (Raw): This layer stores data in its most basic form, exactly as it arrived from the source systems. It serves as an immutable historical archive that ensures data lineage is always preserved.

- Silver Layer (Enriched): Here, the data is cleaned, deduplicated, and conformed to the enterprise standard. Missing values are filled, units are standardized, and asset hierarchies are applied. This layer is ideal for ad-hoc reporting and basic analytics.

- Gold Layer (Curated): The final layer consists of consumption-ready datasets optimized for machine learning models and high-level KPIs. Data is aggregated and modeled into specific features that drive autonomous decision-making.

This multi-hop architecture ensures that AI algorithms operate on trusted data, minimizing the risk of model drift or errors caused by corrupted inputs.
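The three layers can be sketched as three small functions over plain Python records. This is a simplified illustration under stated assumptions: the record fields, the forward-fill rule for missing values, and the choice of bar as the standard unit are all invented for the example, and a real pipeline would run on a data platform rather than in-memory lists.

```python
from statistics import mean
from typing import Dict, List, Optional, Tuple

PSI_TO_BAR = 0.0689476


def to_bronze(raw: List[dict]) -> Tuple[dict, ...]:
    """Bronze: keep records exactly as received, as an immutable tuple of copies."""
    return tuple(dict(record) for record in raw)


def to_silver(bronze: Tuple[dict, ...]) -> List[dict]:
    """Silver: deduplicate, fill missing values, and standardize units to bar."""
    seen = set()
    last_bar: Dict[str, float] = {}
    silver = []
    for rec in sorted(bronze, key=lambda r: r["ts"]):
        key = (rec["asset"], rec["ts"])
        if key in seen:
            continue  # drop exact duplicates of an (asset, timestamp) pair
        seen.add(key)
        value: Optional[float] = rec.get("value")
        if value is None:
            if rec["asset"] not in last_bar:
                continue  # nothing to fill from yet
            bar = last_bar[rec["asset"]]  # forward-fill the last known value
        else:
            bar = value * PSI_TO_BAR if rec.get("unit") == "psi" else value
        last_bar[rec["asset"]] = bar
        silver.append({"asset": rec["asset"], "ts": rec["ts"],
                       "pressure_bar": round(bar, 3)})
    return silver


def to_gold(silver: List[dict]) -> Dict[str, dict]:
    """Gold: aggregate per-asset features ready for a model or KPI."""
    by_asset: Dict[str, List[float]] = {}
    for rec in silver:
        by_asset.setdefault(rec["asset"], []).append(rec["pressure_bar"])
    return {asset: {"samples": len(vals), "mean_bar": round(mean(vals), 3),
                    "max_bar": max(vals)}
            for asset, vals in by_asset.items()}


raw = [
    {"asset": "pump-1", "ts": 1, "value": 2.0, "unit": "bar"},
    {"asset": "pump-1", "ts": 1, "value": 2.0, "unit": "bar"},    # duplicate
    {"asset": "pump-1", "ts": 2, "value": None, "unit": "bar"},   # missing value
    {"asset": "pump-1", "ts": 3, "value": 100.0, "unit": "psi"},  # non-standard unit
]
bronze = to_bronze(raw)
silver = to_silver(bronze)
gold = to_gold(silver)
```

Keeping bronze untouched means any silver or gold rule can be changed later and replayed against the original records.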

The Governance Guardrail

In 2026, data governance has shifted from a passive compliance checklist to an active, automated control layer. This shift is necessary because AI models can be "confidently wrong," and the lack of a governance framework often leads to shadow AI, where employees use independent tools that are not integrated with corporate data policies.

Effective governance in a smart factory involves:

- Automated Data Observability: Continuously monitoring the health of the data pipeline to detect anomalies or quality drops before they affect production.

- Lineage Tracking: The ability to trace every AI decision back to its source, which is critical for model explainability and regulatory compliance.

- Human-in-the-Loop (HITL): Implementing guardrails where high-risk actions—such as adjusting chemical mixtures—require human verification.
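The lineage and human-in-the-loop guardrails above can be combined into a single gate that every AI-proposed action passes through. The sketch below is illustrative only: the `ProposedAction` fields, the two risk levels, and the returned status strings are assumptions, and the `approve` callback stands in for whatever approval workflow a plant actually uses.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class ProposedAction:
    name: str
    risk: str                           # "low" or "high" (assumed risk levels)
    source_record_ids: Tuple[str, ...]  # lineage: the data behind the decision


def execute_with_guardrail(action: ProposedAction,
                           approve: Callable[[ProposedAction], bool]) -> str:
    """Gate an AI-proposed action on lineage and, for high risk, human approval."""
    # Lineage check: refuse anything that cannot be traced back to source data.
    if not action.source_record_ids:
        return "rejected: no lineage"
    # HITL check: high-risk actions run only after a human explicitly approves.
    if action.risk == "high" and not approve(action):
        return "held: awaiting human approval"
    return "executed"


adjust_mix = ProposedAction("adjust_chemical_mixture", "high", ("rec-101", "rec-102"))
log_note = ProposedAction("log_maintenance_note", "low", ("rec-103",))

held = execute_with_guardrail(adjust_mix, approve=lambda a: False)
approved = execute_with_guardrail(adjust_mix, approve=lambda a: True)
automatic = execute_with_guardrail(log_note, approve=lambda a: False)
```

Low-risk actions never invoke the approver, which keeps humans focused on the decisions where verification matters most.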

Conclusion: From Reactive Firefighting to Agentic AI Autonomy

As we move into the second half of the decade, the competitive landscape of manufacturing is being redrawn. The divide is no longer between those who use AI and those who do not, but between those who have built an industrial data fabric and those who are still struggling with "data spaghetti." The transition from predictive dashboards to autonomous agents represents the final step in this transformation.

The 2026 Competitive Divide

The shift to agentic AI is a transition from systems that merely notify humans of problems to systems that can autonomously solve those problems. In 2026, top performers are deploying agents for autonomous production scheduling, supply chain orchestration, and real-time root cause analysis.

Deloitte predicts a fourfold increase in agentic adoption in manufacturing by 2026. Those who fail to make this transition face an insurmountable gap: as data-ready manufacturers automate their operations, they realize profit margins that are twice as high as those of their peers.

The Roadmap Ahead: Investing in the Industrial Data Fabric

The call to action for leaders in 2026 is clear: stop buying "AI-in-a-box" solutions that promise immediate results but fail to integrate with the reality of the factory floor. Instead, invest in the architecture of connectivity, context, and quality that enables intelligence to scale.

This roadmap involves three key pillars:

- Unify Operational Signals: Transition to a Unified Namespace that eliminates islands of truth and provides a real-time nervous system for the enterprise.

- Liberate Legacy Logic: Extract and modularize the logic hidden in 20-year-old PLCs, making it accessible to modern automation.

- Embed AI into Workflows: Move beyond chatting with documents to building agents that can query databases and execute decisions within Human-in-the-Loop parameters.

Final Vision: The Self-Healing Floor

The ultimate destination is a self-healing and self-optimizing shop floor. In this vision, autonomous agents collaborate to drive efficiency, navigate geopolitical disruptions, and compensate for a shrinking skilled workforce. This is not a future where technology replaces people, but one where technology empowers people to lead with greater clarity and confidence. By solving the "plumbing," manufacturers can finally move from the mirage of the smart factory to the reality of industrial-grade autonomy.

The industrial intelligence revolution is no longer a distant vision; it is an immediate competitive mandate. By 2026, manufacturers that successfully bridge the "GenAI Divide" will not just be predicting failures but autonomously orchestrating repairs and supply chains. Scaling AI from "pilot purgatory" to production requires more than better models; it demands a fundamental shift toward an adaptive, contextualized data foundation. To move your factory into the elite 5% of AI high-performers, secure a self-healing operational future, and build mistake-proof digital poka-yoke systems, you need a partner capable of engineering the complex plumbing of industrial data. For expert assistance in building your factory-ready AI strategy, consult ATS.