Introduction: Reimagining Business Intelligence in the Age of AI

For more than two decades, the dashboard has been the anchor of the data-driven organization. Born from the spreadsheet, dashboards evolved into sophisticated visualization platforms that, for the first time, allowed business leaders to see their data. Since their early days in the 1970s, when they required specialized analysts to perform complex data extraction and transformation, dashboards have set the baseline expectation for data-driven operations.

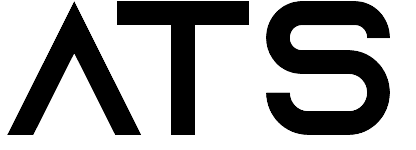

However, this reliance on static visualization has created a critical bottleneck. The very tools meant to provide clarity are now a source of delay and complexity. Business leaders find themselves drowning in data but starving for simple answers. Legacy dashboards are inherently limited—they are static, historical, and can only answer the specific questions they were pre-programmed to address. This rigidity creates analyst backlogs, slows decision-making, and restricts access for non-experts.

This article analyzes the pivotal evolution now underway: the shift from static visualization to generative business intelligence. This new paradigm, which fuses generative AI with business intelligence, redefines how employees and leaders interact with data. By shifting from a visual model to a conversational one, Generative BI is finally democratizing data access, accelerating decision-making, and unlocking the real-time insights organizations have long been promised. For further insights on how voice interfaces are changing productivity, see The End of Typing: Why Voice is the New OS for Business Productivity.

From Spreadsheets to Smart Dashboards: The Journey So Far

The history of BI has been a steady march toward visualization. The traditional stack was a centralized, technical function, with specialist teams managing manual reporting. The first major disruption came with self-service visualization platforms such as Tableau. These tools were revolutionary, offering interactive, drag-and-drop interfaces that raised executive expectations and made "data-driven" a corporate mandate.

Despite this progress, these platforms did not solve the fundamental problem; they merely optimized the creation of static reports. The industry's focus on visualization inadvertently preserved the centralized bottleneck. This created two critical, persistent challenges:

- Analyst Overload: While platforms like Tableau and Power BI are powerful, they are still complex tools designed for specialists. Any ad-hoc question or new query from a business user (the "final mile" of insight delivery) requires filing a ticket and waiting for an analyst to build or modify a report. This dynamic is a primary driver of "death by dashboard," where users are presented with indigestible, abstract reports packed with data but offering few answers.

- The "Final Mile" Problem: The result of this bottleneck is a stark gap between investment and impact. For two decades, despite significant spending on BI tools, analytics adoption rates have stagnated at around 30%. A 2024 analysis found that only 10% of executives believe their employees can effectively leverage data for decision-making. The visualization era, for all its advances, failed to solve the final, critical problem: delivering a specific answer to a non-technical user at the moment of decision. For a deep dive into AI adoption dynamics, see The AI Leadership Paradox: Why 92% of Companies Are Increasing AI Investment, But Only 1% Achieve True Integration.

Generative BI: Core Capabilities and Transformative Benefits

Generative BI (GenBI) represents a fundamental paradigm shift. It replaces the complex drag-and-drop interface with the most intuitive tool of all: natural language. At its core, GenBI combines the power of large language models (LLMs) with an organization's business data, allowing any user to simply ask a question and receive an immediate, synthesized answer.

The process works by interpreting a user's plain-English query (e.g., "Why did our East Coast sales drop in March?") and mapping it onto the organization's semantic logic, that is, its agreed definitions of metrics such as "sales" and dimensions such as "region." The AI then automatically generates the necessary code (typically SQL) to query multiple data sources (such as a CRM or ERP), retrieves the relevant data, and, most importantly, generates a comprehensive narrative, complete with charts, summaries, and predictive "what if" scenarios, to answer the question in seconds.
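To make the question-to-SQL-to-narrative flow concrete, here is a minimal Python sketch using only the standard-library `sqlite3` module. It is an illustration under stated assumptions, not how any particular GenBI product works: the LLM step is replaced by a hypothetical `generate_sql` stub that recognizes only the example question, and the `sales` table, its columns, and the figures are invented for the example.

```python
import sqlite3


def generate_sql(question: str) -> str:
    """Hypothetical stand-in for the LLM translation step.

    A real GenBI system would prompt a model with the question plus schema and
    business-definition context; this stub recognizes a single question.
    """
    q = question.lower()
    if "east coast" in q and "march" in q:
        # Compare March with the prior month for the East Coast region.
        return (
            "SELECT month, SUM(amount) FROM sales "
            "WHERE region = 'East Coast' AND month IN ('2024-02', '2024-03') "
            "GROUP BY month ORDER BY month"
        )
    raise ValueError(f"stub cannot translate: {question!r}")


def narrate(rows: list[tuple[str, float]]) -> str:
    """Turn two (month, total) rows into a one-sentence summary."""
    (prev_month, prev_total), (cur_month, cur_total) = rows
    change_pct = (cur_total - prev_total) / prev_total * 100
    return (
        f"East Coast sales moved from {prev_total:,.0f} in {prev_month} "
        f"to {cur_total:,.0f} in {cur_month} ({change_pct:+.1f}%)."
    )


def answer(conn: sqlite3.Connection, question: str) -> str:
    sql = generate_sql(question)          # 1. question -> SQL
    rows = conn.execute(sql).fetchall()   # 2. SQL -> data
    return narrate(rows)                  # 3. data -> narrative


# Invented sample data for the illustration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, month TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("East Coast", "2024-02", 70000),
        ("East Coast", "2024-02", 50000),
        ("East Coast", "2024-03", 96000),
        ("West Coast", "2024-03", 110000),
    ],
)

print(answer(conn, "Why did our East Coast sales drop in March?"))
```

Note that this sketch only reports *what* changed; answering *why* would require further generated queries that drill into other dimensions (product, channel, customer segment), and a production system would also validate generated SQL before executing it.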

This capability unlocks transformative benefits across the organization, particularly for the marketing and business leaders who are the target audience of this article:

- For Marketing Professionals: Instead of waiting for a post-campaign report, a marketing manager can ask, "How did our Q1 social media campaign compare to last year, and what was the public sentiment from customer comments?" GenBI can analyze ad creative performance, provide actionable summaries to optimize PPC ad spend, and gauge public sentiment in real time, allowing teams to adjust strategy proactively.

- For Sales Teams: A sales director can bypass the static pipeline dashboard and ask, "Show me all deals in my pipeline over $50k that haven't had customer contact in 10 days." The system can also raise alerts on pipeline shifts as they happen and, by connecting CRM data to behavioral analytics, diagnose why a trend is occurring.

- For Executive Leadership: An executive can move beyond high-level summaries and query real-time risk exposure by asking the system to analyze market data or even the sentiment in competitors' earnings calls.
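For the sales director's question above, the generated code might resemble the SQL in this Python sketch. It is hand-written for illustration, not output from any specific product: the `deals` table, its columns, the sample rows, and the fixed "today" date (pinned so the example is reproducible) are all assumptions, and "no contact in 10 days" is interpreted as a last contact at least 10 days ago.

```python
import sqlite3

# Hypothetical SQL a GenBI tool might generate for:
# "Show me all deals in my pipeline over $50k that haven't had customer contact in 10 days"
STALE_DEALS_SQL = """
SELECT name, amount, last_contact
FROM deals
WHERE amount > 50000
  AND last_contact <= date(:today, '-10 days')  -- ISO-8601 dates compare correctly as text
ORDER BY amount DESC
"""

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE deals (name TEXT, amount REAL, last_contact TEXT)")
conn.executemany(
    "INSERT INTO deals VALUES (?, ?, ?)",
    [
        ("Acme renewal", 80000, "2024-06-01"),
        ("Globex expansion", 120000, "2024-06-18"),
        ("Initech pilot", 30000, "2024-05-01"),
        ("Umbrella upsell", 65000, "2024-06-10"),
    ],
)

# Pin "today" so the result does not depend on when the example runs.
stale = conn.execute(STALE_DEALS_SQL, {"today": "2024-06-20"}).fetchall()
for name, amount, last_contact in stale:
    print(f"{name}: ${amount:,.0f}, last contact {last_contact}")
```

In a live deployment the date would come from the current clock (for example SQLite's `date('now')`), and the proactive alerting described above would amount to re-running a query like this on a schedule and notifying the owner when new rows appear.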

This new model drives tangible returns. A 2024 IDC report, sponsored by Microsoft, found that for every $1 invested in generative AI, companies are seeing an average return of $3.70. This is not a distant-future technology; its adoption is an immediate competitive imperative. Over 85% of Fortune 500 companies are already using Microsoft AI solutions, with other reports suggesting nearly all have integrated AI in some capacity. The value is driven by automating complex analysis, accelerating decisions, and finally achieving true data democratization by empowering non-technical users to self-serve.

Pitfalls, Myths, and Responsible AI Deployment

The staggering ROI figures and high-speed adoption have created a "fear of missing out" that drives many organizations to rush implementation. This has led to a harsh reality: Generative BI is not a "silver bullet".

We are now seeing a "GenAI Divide": a widening gap between the few organizations successfully scaling AI and the many stuck in pilot projects. An MIT NANDA report highlighted this divide, finding that 95% of custom generative AI pilots fail to deliver measurable P&L impact or reach production. The primary reason is not a failure of technology but a gap between "implementation" (buying a tool) and "adoption" (the workforce's willingness to use it, which depends on trust). Strategies for scaling AI effectively are explored in Scaling AI in Operations: Managing Change for Sustainable Impact.

Building this operational trust requires navigating three core challenges:

- The Data Quality Crisis: The single greatest obstacle to successful GenBI adoption is poor data quality. Research from Informatica reveals that 42% of data leaders cite data quality as the main hurdle, ahead of privacy (40%) and AI ethics (38%). A Qlik survey echoed this, with 81% of AI professionals admitting their company has significant data quality issues. GenBI is only as good as the data it accesses; fragmented, siloed, and "dirty" data is the root cause of project failure.

- AI "Hallucinations": The biggest threat to user trust is the risk of AI "hallucinations," meaning outputs that are plausible, confident, and factually wrong. These errors are not purely random; they are often triggered by the AI querying poor-quality data or by a user providing a vague prompt, forcing the model to "fill in the blanks." A single hallucination delivered to an executive can poison the well and kill adoption across the entire organization.

- Governance and Security: Democratizing data access is also the technology's greatest risk. Without ironclad governance, a GenBI tool could allow a marketing user to query sensitive HR salary data or expose customer PII.
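Because poor data quality is named above as the root cause of failure, one practical safeguard is a quality gate that profiles a dataset before a GenBI tool is allowed to query it. The Python sketch below is a deliberately minimal illustration under stated assumptions: it checks only two things (missing values in required columns and duplicate identifiers), and the function name, column names, and sample records are hypothetical. Real data-quality programs also cover freshness, valid ranges, and consistency across systems.

```python
def quality_issues(rows: list[dict], required: list[str], key: str) -> list[str]:
    """Return human-readable data-quality problems found in `rows` (hypothetical helper).

    Checks (deliberately minimal):
      * required columns whose value is None or an empty string
      * duplicate values in the column that should uniquely identify a record
    """
    issues = []
    total = len(rows)

    # Completeness: count missing values per required column.
    for col in required:
        missing = sum(1 for r in rows if r.get(col) in (None, ""))
        if missing:
            issues.append(f"{col}: {missing}/{total} missing")

    # Uniqueness: collect identifiers that appear more than once.
    seen, dupes = set(), set()
    for r in rows:
        k = r.get(key)
        if k in seen:
            dupes.add(k)
        seen.add(k)
    if dupes:
        issues.append(f"{key}: duplicate values {sorted(dupes)}")

    return issues


# Hypothetical customer extract with one missing region, one missing revenue,
# and a duplicated customer_id.
customers = [
    {"customer_id": 1, "region": "East Coast", "revenue": 1200.0},
    {"customer_id": 2, "region": None, "revenue": 800.0},
    {"customer_id": 2, "region": "West Coast", "revenue": None},
]

problems = quality_issues(customers, required=["region", "revenue"], key="customer_id")
if problems:
    # Gate: keep the dataset away from the GenBI layer until the issues are fixed.
    print("Blocked:", "; ".join(problems))
```

The design choice is to fail closed: a dataset that does not pass is withheld from the conversational layer rather than queried with a warning, since a confident answer built on duplicated or incomplete records is exactly the kind of output that erodes trust.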

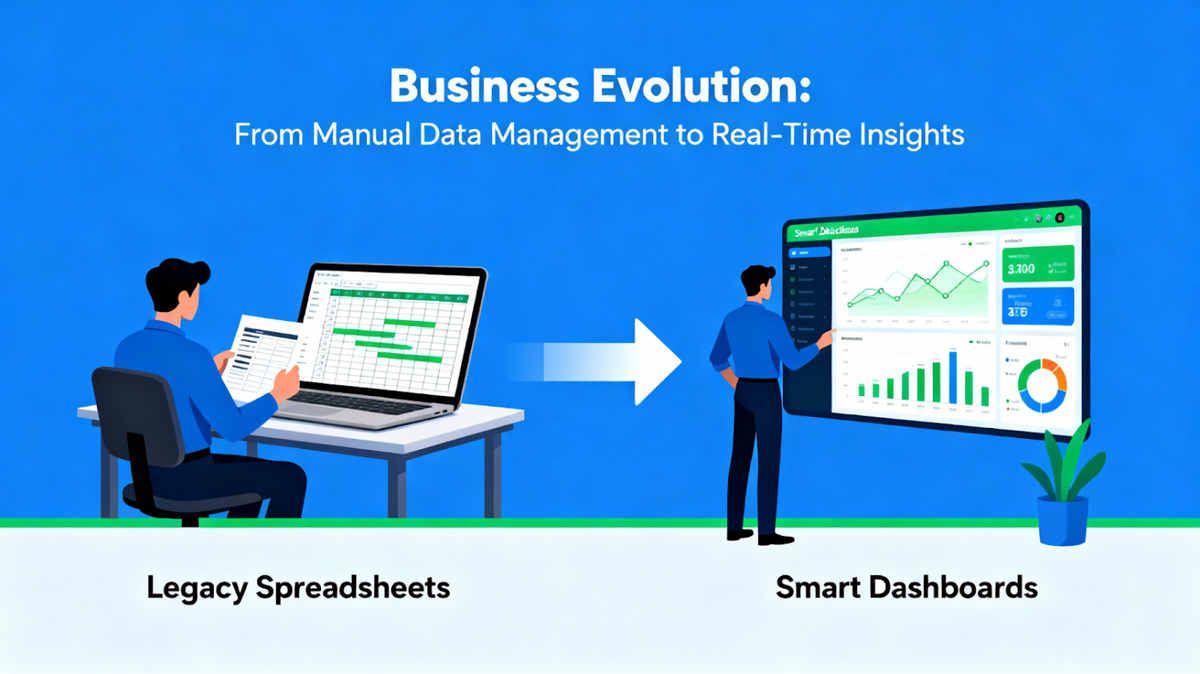

Successfully bridging the GenAI divide depends on building a framework for operational trust. This is not optional; it is the prerequisite for adoption. This framework rests on three pillars:

- Human-in-the-Loop (HITL): This is a non-negotiable oversight model. It means designing human review into the AI system from conception, not tacking it on as an afterthought. Subject matter experts must be involved to monitor outputs and build workforce confidence.

- Explainable AI (XAI) and Traceability: To trust an answer, a user must be able to see its source. A trustworthy GenBI system must provide data lineage—the ability to trace an AI-generated insight back to the specific data points and tables used to create it.

- Automated Governance: Security must be embedded. Role-Based Access Control (RBAC) is the key technical safeguard that enforces a user's permissions at the query level. This ensures data is democratized safely, respecting all internal and external data policies. In regulated industries such as healthcare (HIPAA) or finance (FINRA rules), this level of traceability and governance is a legal and compliance mandate. For a global perspective on trustworthy AI and governance, read The UNESCO Recommendation: A Strategic Blueprint for Trustworthy AI Transformation.
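Query-level RBAC and traceability can reinforce each other. The Python sketch below uses the standard-library `sqlite3` authorizer hook (`Connection.set_authorizer`), which SQLite calls while compiling each statement, to do both at once: it denies reads of any table outside a role's grant and records every (table, column) pair a permitted query touches, which is raw material for data lineage. The role names, tables, grants, and the `run_governed` helper are hypothetical, and a production deployment would more likely enforce permissions in the warehouse or semantic layer than in application code.

```python
import sqlite3

# Hypothetical role grants: which tables each role may read.
ROLE_GRANTS = {
    "marketing": {"campaigns"},
    "hr": {"campaigns", "salaries"},
}


def run_governed(conn: sqlite3.Connection, sql: str, role: str):
    """Run `sql` as `role`; return (rows, lineage) or raise sqlite3.DatabaseError."""
    allowed = ROLE_GRANTS.get(role, set())
    lineage: set[tuple[str, str]] = set()

    def authorizer(action, arg1, arg2, db_name, source):
        # SQLITE_READ fires for each column read: arg1 is the table, arg2 the column.
        if action == sqlite3.SQLITE_READ:
            if arg1 not in allowed:
                return sqlite3.SQLITE_DENY  # statement compilation fails
            lineage.add((arg1, arg2))
        return sqlite3.SQLITE_OK  # allow everything else (SELECT, functions, ...)

    conn.set_authorizer(authorizer)
    try:
        rows = conn.execute(sql).fetchall()
    finally:
        # Restore an allow-all callback (passing None requires Python 3.11+).
        conn.set_authorizer(lambda *args: sqlite3.SQLITE_OK)
    return rows, lineage


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE campaigns (channel TEXT, spend REAL);
    CREATE TABLE salaries (employee TEXT, salary REAL);
    INSERT INTO campaigns VALUES ('social', 500), ('search', 700), ('social', 300);
    INSERT INTO salaries VALUES ('Ana', 90000);
""")

# A marketing user may query campaign data; lineage shows which columns were used.
rows, lineage = run_governed(
    conn,
    "SELECT channel, SUM(spend) FROM campaigns GROUP BY channel ORDER BY channel",
    "marketing",
)
print(rows, sorted(lineage))

# The same user asking about salaries is blocked before any data is read.
try:
    run_governed(conn, "SELECT AVG(salary) FROM salaries", "marketing")
except sqlite3.DatabaseError as exc:
    print("Denied:", exc)
```

Because the callback runs at compile time, the lineage set lists every column the query touches, including those used only for filtering or grouping. Linking each sentence of a generated narrative back to these columns, and routing flagged answers to a human reviewer, would be further layers on top of this sketch.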

Conclusion: The Future—From Insight Delivery to Everyday AI Partnership

The move to Generative BI is not a final destination; it is an ongoing transformation of the business operating model. The future of business intelligence is "conversational analytics." This vision sees the static, standalone dashboard evolving into an intelligent, always-on assistant for business strategy.

This "AI assistant," already emerging in tools like Amazon Q and Databricks Genie, will be embedded in the collaboration tools teams already use, like Slack and Microsoft Teams. It will proactively identify trends, automate workflows, and serve as a collaborative partner for strategic planning.

However, the path to this future will be a "pragmatic reset," as Forrester predicts for 2026. The hype will fade, and wary buyers will demand "proof over promises". Success will hinge on tangible outcomes and, above all, trust. The key to navigating this reset may lie in Gartner's prediction that by 2026, 75% of businesses will use generative AI to create synthetic customer data. This approach—training AI on a safe, compliant, synthetic version of enterprise data—offers a practical bridge to solve the quality, privacy, and governance challenges of today.

For marketing professionals and business owners, the call to action is clear. To bridge the gap from dashboards to a truly generative, trusted, and democratized BI, the first investment is not in a large language model. The first investment must be in a foundational data governance and quality strategy. The AI-assisted future is powerful, but it will only be built on a foundation of data that can be trusted.

Navigating this complex transformation from legacy data to an AI-powered enterprise is a significant challenge. It requires a partner who understands both the technology and the strategic imperatives of change management.

If your organization is ready to build that trusted foundation and unlock the true potential of Generative BI, we invite you to learn more about the AI transformation consulting services at Alpha Technical Solutions.