Introduction: The Agentic Shift—Beyond Automation to True Augmentation

For years, the conversation around AI in operations has been dominated by automation—specifically, Robotic Process Automation (RPA). We learned to think of AI as a digital tool for mimicking repetitive, rule-based human tasks faster and more cheaply. But the ground has shifted beneath our feet. Today, a new paradigm is redefining the future of work: agentic AI.

This is not just another upgrade. Agentic systems represent a new category of operational capability. They are autonomous, reasoning "digital colleagues" that can perceive their environment, create multi-step plans, execute complex tasks using a variety of digital tools, and learn from the outcomes. This leap from merely doing to reasoning and acting is reconfiguring how work itself is accomplished.

The excitement is palpable and justified. Nearly 90% of business leaders now consider AI fundamental to their strategy. Yet, a critical misunderstanding persists. The journey from a successful pilot program to enterprise-wide, sustainable impact is not paved with technology alone. It is a journey of profound organizational change. The most significant challenge isn't technical; it's human. This post provides the people-driven playbook for navigating this transformation, moving beyond a narrow Return on Investment (ROI) focus to build lasting organizational resilience, capability, and a true human-AI partnership.

Section 1: Understanding the New Operational Partner: What is Agentic AI?

To manage the change, leaders must first grasp the nature of what is changing. Agentic AI is not an incremental improvement on older automation; it is a categorical leap in capability.

Defining the Paradigm Shift

Agentic AI systems are proactive and goal-driven. Unlike generative AI, which reacts to prompts to create content, or RPA, which follows a rigid script, agentic AI operates with a degree of autonomy to achieve a specified objective. At its core, it uses a Large Language Model (LLM) as a "reasoning engine" or "brain". This allows it to:

- Perceive: Gather and understand information from diverse sources like databases, emails, and user interfaces.

- Reason & Plan: Analyze the information, formulate a multi-step plan to achieve a goal, and select the right tools for each step (e.g., APIs, internal software, vector databases).

- Act: Execute the plan by interacting with other systems, performing tasks, and making context-aware decisions.

- Learn: Refine its approach for future tasks, creating a continuous learning loop.

These characteristics—autonomy, memory, planning, and tool use—transform the system from a passive tool into an active "agent" of the user, capable of orchestrating and completing complex workflows that were previously the exclusive domain of human knowledge workers.
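To make the perceive, plan, act, learn cycle concrete, here is a minimal Python sketch of one pass through that loop. It is an illustration under stated assumptions, not how any particular agent framework works. A fixed rule stands in for the LLM "reasoning engine". The tools (`lookup_order`, `draft_reply`) and the `Agent` class are hypothetical names invented for this example.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

Context = dict[str, str]

# Hypothetical tools. In a real deployment these might wrap APIs, internal
# software, or a vector database; here each reads and extends a context dict.
def lookup_order(ctx: Context) -> Context:
    return {**ctx, "status": "delayed"}  # stands in for an order-system query

def draft_reply(ctx: Context) -> Context:
    return {**ctx, "reply": f"Order {ctx['order_id']} is {ctx['status']}."}

TOOLS: dict[str, Callable[[Context], Context]] = {
    "lookup_order": lookup_order,
    "draft_reply": draft_reply,
}

@dataclass
class Agent:
    goal: str  # the objective the agent pursues (unused by this toy planner)
    memory: list[str] = field(default_factory=list)

    def perceive(self, message: str) -> Context:
        # Perceive: pull structured facts out of raw input.
        match = re.search(r"#(\w+)", message)
        return {"order_id": match.group(1)} if match else {}

    def plan(self, ctx: Context) -> list[str]:
        # Reason & plan: a fixed rule stands in for the LLM "reasoning engine"
        # and selects an ordered list of tools.
        return ["lookup_order", "draft_reply"] if "order_id" in ctx else []

    def act(self, steps: list[str], ctx: Context) -> Context:
        # Act: execute each planned tool call in order.
        for step in steps:
            ctx = TOOLS[step](ctx)
        return ctx

    def learn(self, steps: list[str], ctx: Context) -> None:
        # Learn: record which plan led to which outcome. A real agent would
        # consult this memory when planning; this sketch only stores it.
        self.memory.append(f"{'+'.join(steps) or 'no-op'} -> {'reply' in ctx}")

    def run(self, message: str) -> Context:
        ctx = self.perceive(message)
        steps = self.plan(ctx)
        ctx = self.act(steps, ctx)
        self.learn(steps, ctx)
        return ctx

agent = Agent(goal="answer order-status questions")
print(agent.run("Where is order #A123?")["reply"])  # Order A123 is delayed.
print(agent.memory)  # ['lookup_order+draft_reply -> True']
```

The difference from an RPA script lies in `plan`. The sequence of steps is chosen at run time from what the agent perceived, rather than being hard-coded. Replacing the rule with an LLM call is what turns this skeleton into an agent in the sense described above.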

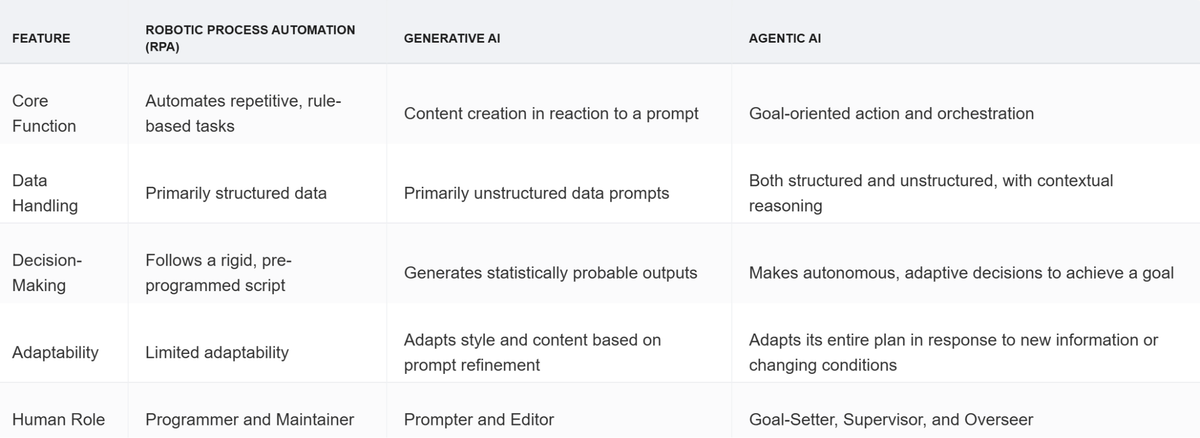

The Operational Evolution: From RPA to Agentic Process Automation (APA)

The distinction between agentic AI and its predecessors is not academic; it is fundamental to understanding the new change management imperative. Agentic Process Automation (APA) represents a new frontier where intelligent agents become strategic partners in business operations. The following table illustrates the magnitude of this evolution.

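Comparison: RPA, Generative AI, and Agentic AI

| Dimension | RPA | Generative AI | Agentic AI |
| --- | --- | --- | --- |
| How it operates | Follows a rigid, rule-based script | Reacts to prompts | Pursues a specified objective with a degree of autonomy |
| What it does | Mimics repetitive human tasks faster and more cheaply | Creates content | Perceives, reasons and plans, acts through tools, and learns from outcomes |
| Relationship to people | A tool employees are trained to operate | A tool employees prompt | A "digital colleague" employees onboard, delegate to, and supervise |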

This evolution from a simple tool to a sophisticated partner demands a corresponding evolution in our approach to workforce integration. We train employees on a tool like RPA, teaching them which buttons to press. However, one does not simply "train" a new team member; one "onboards" them. For an agentic AI, this means setting clear goals, defining the context of its work, establishing boundaries and protocols for escalation, and teaching employees how to collaborate with, delegate to, and supervise their new non-human colleague. This is not a matter of software training; it is a fundamental redesign of roles and collaborative norms.

Section 2: Agentic AI in the Wild: From Data Pipelines to Patient Care

The theoretical power of agentic AI is already manifesting in real-world operations. The following case studies, spanning internal expert functions to external client-facing services, reveal how this technology is redefining roles and workflows and, in doing so, highlight the critical human factors that govern its success.

Case Study 1: Monte Carlo — Augmenting the Expert Data Engineer

Context: Poor data quality is a chronic and costly problem. Data engineers often spend up to 40% of their time reactively firefighting data incidents—diagnosing broken pipelines, correcting inaccuracies, and dealing with the downstream consequences of bad data. This is a significant drain on highly skilled, expensive talent.

The Agentic Solution: Data observability firm Monte Carlo has deployed a suite of AI "Observability Agents" to automate the most tedious and time-consuming aspects of data quality management.

- The Monitoring Agent autonomously analyzes data usage patterns to recommend and deploy sophisticated data quality rules and thresholds. This has resulted in a 60% recommendation acceptance rate from human engineers and has increased monitoring deployment efficiency by over 30%.

- The Troubleshooting Agent acts as an automated investigator. When a data quality issue arises, it deploys dozens of sub-agents that work in parallel to explore hundreds of potential causes across the data stack. It can pinpoint the root cause—be it bad source data, a system failure, or a model error—in minutes, a process that often takes human teams hours or even days. Monte Carlo projects this will reduce the average time to resolution by 80%.
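The fan-out pattern behind a troubleshooting agent can be illustrated in a few lines of Python. Many sub-agents each test one candidate cause in parallel, and the results are ranked. This is a minimal sketch and does not describe Monte Carlo's implementation. The hypothesis names and the `EVIDENCE` scores are hypothetical placeholders for what would really come from lineage, job logs, and source data.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical evidence scores for one broken table. A real investigation would
# query lineage, job logs, and source data instead of reading a dict.
EVIDENCE = {
    "bad_source_data": 0.9,
    "pipeline_job_failure": 0.2,
    "schema_change": 0.05,
}

def investigate(hypothesis: str) -> tuple[str, float]:
    # One sub-agent: test a single candidate cause and score how well it fits.
    return hypothesis, EVIDENCE.get(hypothesis, 0.0)

def troubleshoot(hypotheses: list[str], workers: int = 8) -> list[tuple[str, float]]:
    # Fan out: run all sub-agents in parallel, then rank causes by score.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(investigate, hypotheses))
    return sorted(results, key=lambda r: r[1], reverse=True)

ranked = troubleshoot(["pipeline_job_failure", "model_error", "bad_source_data", "schema_change"])
print(ranked[0])  # ('bad_source_data', 0.9)
```

Most of the speed-up over a human team comes from running the checks concurrently rather than one after another. The engineer still reviews the top-ranked cause before acting on it.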

This application of agentic AI does not replace the data engineer. Instead, it fundamentally elevates the role. The primary pain point for data engineers is the manual, reactive work of finding and fixing data issues. By automating both the detection (monitoring) and the diagnosis (troubleshooting) of these problems, the agents free the engineer from the constant "firefighting". With this reclaimed time and cognitive bandwidth, the engineer's focus can shift to higher-value, strategic work: designing more robust and resilient data architectures, optimizing pipeline performance for efficiency, and acting as proactive "data stewards" who build quality into the system from the start. The impact is therefore not just about efficiency; it is a redefinition of the data engineering function, transforming it from a reactive technical role into a proactive strategic one.

Case Study 2: NVIDIA & GE HealthCare — Expanding Access and Expertise in Radiology

Context: The healthcare sector faces a dual crisis of rising demand and a critical shortage of specialists, particularly radiologists. The workload for existing radiologists is immense, yet a large portion of it is routine; in some primary care settings, up to 80% of chest X-rays reveal no abnormalities and require no further action.

The Agentic Solution: NVIDIA and GE HealthCare are collaborating to develop autonomous diagnostic imaging systems using NVIDIA's Isaac for Healthcare platform. This platform allows them to train, test, and validate AI agents in physically accurate virtual environments before deploying them in the real world. The goal is to build autonomy into imaging workflows, from patient positioning to the initial analysis of scans. Other autonomous AI systems, such as Oxipit's ChestLink, have demonstrated the ability to report on chest X-rays they judge to be healthy with over 99% sensitivity for abnormal findings (that is, very few abnormal studies are wrongly cleared). This effectively screens the high volume of normal cases out of the radiologist's worklist.
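Sensitivity is easy to misread in this context. As a standard metric it equals true positives / (true positives + false negatives), computed over the abnormal (positive) studies. A 99% figure therefore means roughly 1 in 100 abnormal X-rays could be cleared without a radiologist. The sketch below works through that arithmetic. The function names are hypothetical, and the counts are illustrative only. They do not come from any study.

```python
def sensitivity(true_positives: int, false_negatives: int) -> float:
    # Positives are abnormal studies, so sensitivity is the share of abnormal
    # X-rays the system correctly declines to clear as normal.
    return true_positives / (true_positives + false_negatives)

def triage(total: int, abnormal: int, sens: float, normals_cleared: int) -> dict[str, int]:
    # Abnormal studies the system would wrongly report as normal.
    missed = round(abnormal * (1 - sens))
    # Everything reported autonomously leaves the radiologist's worklist.
    cleared = normals_cleared + missed
    return {"missed_abnormal": missed, "radiologist_worklist": total - cleared}

# Illustrative numbers only, not figures from any study.
print(sensitivity(198, 2))           # 0.99
print(triage(1000, 200, 0.99, 600))  # {'missed_abnormal': 2, 'radiologist_worklist': 398}
```

These two numbers capture the trade-off discussed next. A large share of the worklist moves off the radiologist's desk, and the small residual miss rate is the risk that governance has to own.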

In expert domains like radiology, the primary value of agentic AI is not replacement, but cognitive offloading and expertise amplification. A radiologist's expertise is a scarce and valuable resource that is currently being inefficiently allocated to high-volume, low-complexity tasks. By automating this "mundane" portion of the workflow, the AI system offloads the cognitive burden from the human expert. This allows the radiologist to dedicate their full cognitive capacity and years of experience to the most challenging, ambiguous, and critical cases where human judgment, pattern recognition, and intuition are irreplaceable. The result is a blended human-AI system that is more efficient, can serve more patients, and is potentially more accurate than either could be alone. The AI handles the volume, while the human expert handles the complexity—a powerful model for scaling expertise, not eliminating it.

Case Study 3: Hippocratic AI — Navigating the Human-Machine Frontier in Patient Care

Context: The potential for AI to alleviate healthcare staffing shortages and reduce costs is enormous. Hippocratic AI has entered this space with a stark value proposition: an "agentic nurse" for as little as $9 per hour, compared to a human nurse at $90 per hour.

The Agentic Solution: Hippocratic AI provides voice-based generative AI agents designed to be empathetic and responsive. These agents conduct non-diagnostic tasks like post-discharge follow-up calls, medication reminders, and chronic care management. To embed clinical wisdom, the company involves licensed clinicians in the design and testing of the agents, a crucial step toward safety and efficacy.

The Human Challenge: This case study serves as a critical pivot, revealing the immense friction that arises when autonomous agents interact directly with vulnerable people in high-stakes environments.

- Trust and Empathy: The core challenge is trust. While some research suggests patients may be more honest with an AI due to a perceived lack of judgment, other studies show a deep-seated fear of AI's "coldness" and inability to comprehend the emotional dimensions of care. This skepticism is widespread: 88% of physicians and 63% of patients worry about AI delivering inaccurate health guidance.

- Accountability and Ethics: The question of liability is paramount. If an autonomous agent makes a mistake, who is responsible—the developer, the hospital, or the supervising clinician? The American Nurses Association (ANA) is unequivocal: AI is an adjunct to, not a replacement for, nursing judgment, and the human nurse remains accountable for patient care. This creates a paradox. If a human nurse must "babysit" the AI to ensure safety and provide oversight, the promised 10x cost savings become illusory.

- The ROI Illusion: The simple $9 vs. $90 comparison is dangerously misleading. It ignores the hidden costs of necessary human oversight, the immense reputational risk of trust erosion, and the ethical imperative to maintain the human connection that is central to healing.
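The oversight arithmetic can be made explicit. Suppose one nurse supervises n agents. Each agent-hour then costs the agent's rate plus 1/n of a nurse-hour. This minimal sketch applies that simplifying assumption to the $9 and $90 figures quoted above. It deliberately ignores escalations, liability, and reputational costs. The function names are hypothetical.

```python
def effective_hourly_cost(agent_rate: float, nurse_rate: float, agents_per_nurse: float) -> float:
    # Each agent-hour carries its own price plus a share of the supervising
    # nurse's time: one nurse overseeing n agents adds nurse_rate / n.
    return agent_rate + nurse_rate / agents_per_nurse

def savings_multiple(agent_rate: float, nurse_rate: float, agents_per_nurse: float) -> float:
    # How many times cheaper than a human nurse the supervised agent really is.
    return nurse_rate / effective_hourly_cost(agent_rate, nurse_rate, agents_per_nurse)

for n in (1, 3, 10):
    print(n, effective_hourly_cost(9, 90, n), round(savings_multiple(9, 90, n), 2))
# 1 99.0 0.91
# 3 39.0 2.31
# 10 18.0 5.0
```

With one-to-one supervision the agent costs more than the nurse it was meant to replace. The effective cost always stays above $9, so no finite supervision ratio reaches the advertised 10x.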

The technology to create conversational health agents exists and is rapidly improving. The business case, on paper, appears overwhelmingly positive. However, successful adoption hinges entirely on acceptance by two groups: patients and clinicians. Both have valid, deeply held concerns about accuracy, privacy, accountability, and the loss of the human element. Unlike an internal data pipeline where failure causes operational disruption, failure in a patient-facing context can cause direct human harm and irrevocably damage an institution's reputation. Therefore, the scaling of client-facing agentic AI is governed not by technical feasibility or a simplistic ROI calculation, but by the speed of trust. The limiting factor is not how quickly the agents can be built, but how quickly trust can be earned with all stakeholders. This requires radical transparency, robust governance, clear accountability, and a change management process that prioritizes safety and ethical alignment above all else.

Section 3: The People-Driven Playbook for Scaling AI Transformation

The journey from a promising AI pilot to an enterprise-wide capability is where most initiatives falter. Success requires a deliberate shift in mindset—from managing a technology project to leading an organizational transformation.

The Pilot Purgatory: Why AI Initiatives Stall

Many organizations celebrate successful AI pilots that demonstrate clear value, only to see them languish in "pilot purgatory," never achieving scale. The reason is that pilots often operate in a controlled vacuum, shielded from the messy realities of the broader organization. When the time comes to scale, the initiative collides with entrenched workflows, legacy systems, cultural resistance, and a workforce that fears being replaced. The failure is not one of technology, but of change management.

Crafting a North Star: A Human-Centric Change Vision

To escape this trap, leaders must adopt a new framework for change, such as McKinsey's, which focuses on outcomes rather than tools. This is not about deploying agentic AI; it's about architecting a future state of human-AI collaboration.

- Define Outcomes, Not Tools: The vision must be inspiring and human-centric. Instead of "We will deploy AI agents in our call center," the North Star should be, "We will empower our customer service professionals to become true client advocates by equipping them with AI partners that instantly handle all research, documentation, and administrative tasks, freeing them to focus 100% on solving complex customer problems."

- Reimagine Workflows, Not Just Tasks: True transformation requires rethinking end-to-end processes. Leaders must map how teams of humans and "swarms" of AI agents will work together in a blended workforce. This strategic exercise involves identifying which functions are suited for high automation—potentially becoming "Minimum Viable Organizations" (MVOs) where agents handle most work with light human oversight—and which high-touch functions will remain human-led and AI-augmented.

- Build a Foundation of Trust and Governance: This is the bedrock of scaling. Without it, the entire structure will collapse.

  - Meaningful Human Oversight: This is a non-negotiable ethical and practical requirement. Organizations must implement a clear oversight model based on the context and risk of the AI's tasks. These models include: "human-in-the-loop," where a person is involved in every decision; "human-on-the-loop," where a person monitors the AI and can intervene when necessary; and "human-in-command," which provides strategic oversight and the ultimate authority to override or shut down the system.

  - Transparency and Explainability (XAI): For human supervisors to trust and effectively oversee AI agents, they must be able to understand why an agent made a particular recommendation or took a specific action. Opaque "black box" systems erode trust and make accountability impossible.
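One way to encode these oversight models is a small policy layer between an agent and the systems it acts on. In this minimal Python sketch, high-risk actions need human approval (human-in-the-loop) and lower-risk actions proceed under monitoring (human-on-the-loop). A shut-down switch represents human-in-command, and every decision is logged so reviewers can see what ran and why. The risk labels, the `Supervisor` class, and the `approve` callback are hypothetical and are not taken from any governance standard.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable

class Oversight(Enum):
    IN_THE_LOOP = "a person approves the action before it takes effect"
    ON_THE_LOOP = "the action proceeds; a person monitors and can intervene"

@dataclass
class Action:
    description: str
    risk: str  # hypothetical labels: "low", "medium", "high"

@dataclass
class Supervisor:
    high_risk: frozenset[str] = frozenset({"high"})
    halted: bool = False  # human-in-command: authority to shut the system down
    audit_log: list[str] = field(default_factory=list)

    def mode_for(self, action: Action) -> Oversight:
        # Map each action's risk label to an oversight model.
        return Oversight.IN_THE_LOOP if action.risk in self.high_risk else Oversight.ON_THE_LOOP

    def submit(self, action: Action, approve: Callable[[Action], bool]) -> bool:
        if self.halted:
            self.audit_log.append(f"BLOCKED (halted): {action.description}")
            return False
        mode = self.mode_for(action)
        # Only in-the-loop actions wait for a human; on-the-loop actions run
        # immediately and are left for monitoring via the audit log.
        allowed = approve(action) if mode is Oversight.IN_THE_LOOP else True
        self.audit_log.append(f"{mode.name} {'ran' if allowed else 'rejected'}: {action.description}")
        return allowed

    def shut_down(self) -> None:
        self.halted = True

sup = Supervisor()
print(sup.submit(Action("send status update", "low"), approve=lambda a: False))   # True
print(sup.submit(Action("issue large refund", "high"), approve=lambda a: False))  # False
sup.shut_down()
print(sup.submit(Action("send status update", "low"), approve=lambda a: True))    # False
```

The audit log is the small step toward explainability in this design. It does not explain the model's reasoning, but it records which oversight model applied to each action and what the outcome was.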

From Resistance to Resilience: Engaging the Workforce in Co-Creation

The traditional, top-down model of change management—where leaders decide and employees comply—is obsolete for a transformation as profound as AI integration. It must be replaced with a collaborative, "middle-out" approach that turns employees into active participants.

- Acknowledge and Address the Fear: The single greatest barrier to adoption is fear—fear of job displacement, fear of losing autonomy, and fear of the unknown. This must be confronted with direct, transparent, and continuous communication.

- Communicate the "Why" and the "What's In It For Me": The business case for AI must be clear, but it needs to be framed in terms of augmentation, not just automation. Leaders must articulate how AI will eliminate tedious, repetitive, and low-value tasks, freeing employees to focus on more creative, strategic, and engaging work that leverages their uniquely human skills.

- Identify and Empower Champions: In every organization, there are enthusiastic early adopters. These individuals should be identified, trained, and empowered as AI "superusers" and change champions. They become the most credible advocates, demonstrating the benefits to their peers, providing authentic feedback to leadership, and helping to build momentum from the ground up.

- Involve Employees in the Redesign: The most powerful strategy to overcome resistance is to make employees co-creators of their own future workflows. The employees performing a job are the world's foremost experts on that job's inefficiencies and pain points. By involving them in workshops to map their current processes and identify opportunities for AI augmentation, organizations foster a deep sense of ownership. This transforms the dynamic from a change being done to them to a change being designed by them. This "middle-out" approach, where innovation bubbles up from the teams themselves under the guidance of a strategic North Star, is more agile, generates superior solutions, and builds buy-in organically.

Section 4: Building the Workforce of Tomorrow: The Reskilling and Upskilling Imperative

Scaling agentic AI is not about phasing people out; it's about skilling them up. The long-term success of any AI transformation depends on a deliberate and sustained investment in developing the human capabilities required to work alongside intelligent machines.

Redefining Roles, Not Eliminating People

The dominant narrative of AI-driven mass unemployment is overly simplistic. Evidence from early AI implementations suggests that job reorganization is far more prevalent than outright job displacement, with automation often leading to a reduction in tedious work and an increase in worker engagement. As Amazon Web Services CEO Matt Garman sharply noted, laying off junior employees to replace them with AI is "one of the dumbest things" a company can do. These employees are often the most adept at adopting new AI tools and represent the organization's future talent pipeline. The strategic goal must be augmentation, not replacement.

A Practical Roadmap for AI Readiness

Building an AI-ready workforce requires a structured approach that moves beyond ad-hoc training to create a culture of continuous learning.

- Conduct a Skills Inventory & Needs Assessment: The first step is to create a clear picture of the starting point. Organizations must map the current skills of their workforce and conduct a gap analysis against the future competencies required in a human-AI collaborative environment.

- Define the New "Human" Skillset: As AI handles more analytical and routine tasks, the most valuable human skills become those that machines cannot replicate. Reskilling efforts must focus on cultivating these uniquely human capabilities:

  - Critical Thinking & Complex Problem-Solving: The ability to evaluate AI-generated outputs with healthy skepticism, ask insightful questions, and handle the exceptions and edge cases that autonomous systems will inevitably encounter.

  - AI Literacy & Data Fluency: A foundational understanding of how AI models work, their inherent limitations (such as the potential for bias or "hallucinations"), and the ability to interpret data to provide context for AI agents.

  - Ethical Oversight & Responsible AI Use: The capacity to act as the ethical guardian of AI applications, ensuring they are used fairly, transparently, and in alignment with organizational values and societal norms.

  - Collaboration & Communication: The interpersonal skills needed to partner effectively with both AI agents and human colleagues in newly designed, dynamic workflows.

- Create Personalized Learning Pathways: A one-size-fits-all training program is destined to fail. Organizations should leverage AI-powered learning platforms to create tailored reskilling and upskilling roadmaps for different roles and individuals. This should be a blended approach that includes formal courses, project-based learning for hands-on experience, and flexible "micro-learning" modules that fit into the flow of work.

- Foster a Culture of Continuous Learning: The pace of AI evolution is relentless, meaning that today's cutting-edge skill has a shorter half-life than ever before. The only sustainable strategy is to transform into a true "learning organization." This requires leadership to champion and model continuous learning, create psychological safety for experimentation and failure, and build reward systems that recognize skill development, not just short-term performance.

Ultimately, the technology of agentic AI is evolving at a pace that makes training for any single tool a temporary fix. A purely technical reskilling strategy is a losing game. The most durable and valuable capability an organization can build is change agility—the individual and collective capacity to adapt to, learn from, and thrive in new and uncertain situations. A successful reskilling strategy must therefore focus on these meta-skills: teaching people how to learn, fostering a mindset of experimentation, and building the critical thinking frameworks needed to evaluate and adapt to any new technology that emerges. This is the only way to truly future-proof the workforce.

Conclusion: Measuring What Matters—Sustainable Impact Beyond Near-Term ROI

Scaling agentic AI is unequivocally a leadership and change management challenge, not merely a technical one. The technology is the catalyst, but the people are the medium through which its potential is either realized or rejected. Success stories like Monte Carlo and GE HealthCare demonstrate that the true power of AI is unlocked when it is designed to augment and amplify human expertise, freeing skilled professionals to do their best work. Conversely, cautionary tales like the controversy surrounding Hippocratic AI reveal the profound peril of an ROI-only mindset that ignores the foundational currency of human trust.

To navigate this new landscape, leaders must adopt a more holistic definition of success. A simple calculation of cost savings and productivity gains cannot capture the true value, or risk, of enterprise-wide AI integration. Organizations should instead use a "Sustainable Impact Scorecard" for AI initiatives that measures success across a broader set of dimensions:

- Operational Resilience: How has AI improved the adaptability, elasticity, and robustness of our core processes, making the organization more resilient to disruption?

- Employee Engagement & Well-being: Have we measurably reduced tedious and dissatisfying work? Are employees reporting higher levels of job satisfaction and engagement?

- Innovation Velocity: Are our teams using the time and cognitive bandwidth reclaimed from routine tasks to develop new products, services, or process improvements?

- Stakeholder Trust: Are we actively measuring and maintaining high levels of trust with our customers, patients, and partners in all human-AI interactions?

- Organizational Learning Rate: How effectively are we upskilling our workforce? How quickly and safely are we able to absorb and deploy new AI capabilities as they emerge?

The role of a leader in the agentic age is not simply to be a sponsor of AI projects. It is to be the chief architect of a new organizational culture—one that embraces human-AI collaboration as its central operating principle, prioritizes trust and ethical responsibility as non-negotiable foundations, and invests in its people as the ultimate and most enduring source of competitive advantage.

Ready to navigate the complexities of AI integration and build a future-proof workforce? Contact Alpha Technical Solutions today for a strategic consultation on scaling agentic AI for sustainable impact in your organization.