Introduction: The New Competitive Imperative—Moving from AI Adoption to AI Trust

Artificial intelligence (AI) represents a paradigm shift in technology, a general-purpose force reshaping how we work, interact, and live at a pace unseen since the deployment of the printing press six centuries ago. For organizations, the race to harness AI's potential for efficiency, innovation, and growth is well underway.

However, a narrow focus on technological capability alone is a perilous strategy. The next, more durable frontier of competitive advantage lies not in merely adopting AI, but in earning and maintaining stakeholder trust through its responsible and ethical application. Without robust ethical guardrails, AI systems risk becoming powerful vectors for amplifying real-world biases, fueling societal divisions, and threatening fundamental human rights and freedoms. These risks are not abstract; they manifest as profound legal, reputational, and operational liabilities for businesses.

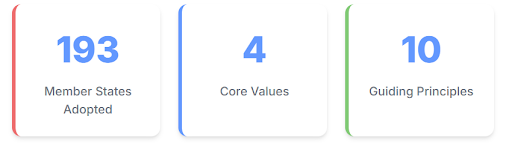

In this high-stakes environment, the era of self-regulation, often prioritizing commercial and geopolitical objectives over people, is definitively ending. A new global consensus is emerging, one that demands the establishment of institutional and legal frameworks to govern AI for the public good. At the forefront of this global movement is the United Nations Educational, Scientific and Cultural Organization (UNESCO). Its Recommendation on the Ethics of Artificial Intelligence, adopted by all 193 Member States in November 2021, stands as the preeminent global framework for building this trust-based advantage. This document is far more than a set of high-minded ideals; it is a strategic blueprint for organizations navigating their AI transformation.

The unanimous adoption of the Recommendation is a powerful leading indicator of future national and regional legislation. The text explicitly recommends that Member States apply its provisions on a voluntary basis by taking appropriate steps, "including whatever legislative or other measures may be required," to give them effect. Although the instrument itself is non-binding, this language points to a growing global tide of regulation.

Organizations that proactively align with this framework today are not simply adopting best practices; they are pre-emptively complying with the future regulatory landscape. This confers a significant first-mover advantage, reducing future compliance costs, minimizing the risk of expensive and disruptive retrofitting of non-compliant systems, and cementing the organization's reputation as a trusted, forward-thinking leader in the digital economy.

Ultimately, embracing the UNESCO framework requires a fundamental cultural shift within an organization. The traditional engineering and product development mindset, often focused on technical feasibility—"Can we build this?"—is no longer sufficient. The Recommendation, by centering its entire structure on human rights, dignity, and well-being, forces a transition to a more critical question: "Should we build this, and if so, how can we do so responsibly?"

This evolution from a purely technical to a socio-technical perspective is the most crucial and demanding aspect of a mature AI transformation. Organizations that successfully navigate this cultural change will not only innovate more responsibly but will also build more resilient products and avoid the kind of high-profile ethical failures that have inflicted lasting damage on other firms. This report provides a comprehensive analysis of the UNESCO Recommendation and a practical guide for leadership to architect this ethical advantage.

1. Decoding the Global Consensus: An Overview of the UNESCO Recommendation on the Ethics of AI

To leverage the UNESCO Recommendation as a strategic asset, leaders must first understand its structure and logic. It is an interconnected system designed to guide action from high-level values down to specific policy implementation.

1.1. A Landmark Achievement: The First Global Standard-Setting Instrument

The Recommendation represents a historic milestone: the first-ever global standard-setting instrument on the ethics of AI. Its adoption by 193 countries establishes a universal normative framework, providing authoritative guidance for policymakers, corporations, and all other stakeholders involved in the AI lifecycle. The document's explicit purpose is to lay the foundations for future regulatory instruments, ensuring that as AI technology evolves, its governance does as well.

Its scope is intentionally comprehensive, addressing the ethical implications of AI across UNESCO's mandate areas of education, science, culture, and communication. Critically, it provides ethical guidance to all "AI actors," a term that encompasses governments, private sector companies, researchers, and civil society, making it directly relevant to the enterprise. To ensure its longevity, the Recommendation adopts a "dynamic understanding of AI," defining it broadly as systems with the capacity to process data in a way that resembles intelligent behavior. This flexible definition prevents the framework from becoming obsolete as technology advances, making it a future-proof guide for long-term strategic planning.

1.2. The Ethical Compass: The Four Core Values

At the heart of the Recommendation are four core values. These serve as the ultimate ethical compass, the fundamental tests against which any AI system or application must be measured. They represent the "why" behind the framework, defining the overarching goals that ethical AI must serve.

- Respect, Protection, and Promotion of Human Rights, Fundamental Freedoms, and Human Dignity: This is the non-negotiable cornerstone of the entire framework. It asserts that AI must serve humanity, and that fundamental rights are inviolable.

- Environment and Ecosystem Flourishing: This value establishes a commitment to ecological responsibility. AI systems should not harm the environment and, where possible, should be leveraged to promote sustainability and address environmental challenges.

- Ensuring Diversity and Inclusiveness: The framework demands that AI actively promotes diversity and inclusion. Its benefits must be shared equitably across all populations, and systems must be designed to avoid perpetuating or exacerbating discriminatory biases against any group.

- Living in Peaceful, Just, and Interconnected Societies: This value underscores the role of AI in fostering social harmony, justice, and equity, ensuring that the technology contributes to a more stable and interconnected world.

1.3. The Foundational Pillars: The Ten Guiding Principles

If the values are the "why," the ten guiding principles are the "what." They translate the abstract values into actionable, human-rights-centered guidelines for AI governance and system design. These principles define the essential attributes of a trustworthy AI system.

- Proportionality and Do No Harm: The use of AI must be appropriate and necessary to achieve a legitimate aim. It must not venture into excessive or dangerous applications that go beyond the stated purpose. This requires rigorous risk assessment to prevent harm. For businesses, this principle guards against "technology for technology's sake," ensuring that AI solutions are fit-for-purpose and do not introduce disproportionate risk.

- Safety and Security: AI actors must proactively identify, address, and mitigate unwanted harms (safety risks) and vulnerabilities to attack (security risks) throughout the entire AI lifecycle. This is a core function of enterprise risk management.

- Fairness and Non-Discrimination: AI systems must be designed and deployed to actively promote social justice, fairness, and non-discrimination. This involves tackling digital divides and minimizing bias to ensure inclusive access to AI's benefits. This is critical for legal compliance, brand reputation, and market access.

- Sustainability: The full societal, cultural, economic, and environmental impacts of AI systems must be holistically assessed and managed for long-term sustainability, aligning with goals like the UN's Sustainable Development Goals. This directly connects AI governance to broader corporate ESG (Environmental, Social, and Governance) objectives.

- Right to Privacy and Data Protection: Privacy, a fundamental human right, must be protected and promoted throughout the AI lifecycle. This necessitates the establishment of adequate data protection frameworks and robust data governance mechanisms. This is a foundational requirement for legal compliance with regulations like the GDPR and for building customer trust.

- Human Oversight and Determination: Member States and organizations must ensure that AI systems do not displace ultimate human responsibility and accountability. Humans must always retain the ability to oversee, question, override, or shut down an AI system, especially in high-stakes situations. This principle is a crucial safeguard against catastrophic failure and loss of control.

- Transparency and Explainability (T&E): The ethical deployment of AI depends on stakeholders being able to understand how systems operate and why they produce certain outcomes. The level of T&E should be appropriate to the context, as there can be tensions between transparency and other principles like privacy or security. T&E is the bedrock of accountability and trust.

- Responsibility and Accountability: Clear accountability mechanisms must be established. AI actors are responsible and accountable for the outcomes of their systems. This requires auditable and traceable systems, along with mechanisms for oversight and due diligence.

- Awareness and Literacy: Public and employee understanding of AI technologies and data should be promoted through accessible education and training. An informed workforce and citizenry are the first line of defense against the misuse of AI.

- Multi-stakeholder and Adaptive Governance & Collaboration: AI governance must be inclusive, involving diverse stakeholders from government, the private sector, academia, and civil society. Furthermore, governance structures must be flexible and adaptive to keep pace with rapid technological development. This approach prevents organizational groupthink and ensures the continued relevance of ethical frameworks.

1.4. From Principles to Practice: The Eleven Policy Action Areas

What makes the UNESCO Recommendation especially practical is its eleven Policy Action Areas. These areas represent the "how" and "where" of implementation, providing concrete domains in which organizations and governments can translate the values and principles into tangible action. For businesses embarking on AI transformation, several of these areas are of immediate strategic importance:

- Policy Area 1: Ethical Impact Assessment (EIA): This calls for the use of EIAs as a proactive tool to identify, assess, and mitigate the potential impacts of an AI system on human rights, the environment, and the core values before deployment.

- Policy Area 3: Data Policy: This calls for governance that goes beyond basic compliance to ensure data quality, security, privacy, and user control throughout the data lifecycle.

- Policy Area 5: Environment and Ecosystems: This encourages the development and use of AI solutions for environmental protection, sustainability, and disaster resilience, while also demanding assessment of the environmental footprint of AI technologies themselves.

- Policy Area 6: Gender: This policy area requires concrete action to advance gender equality, calling for gender-responsive schemes, the development of gender action plans within digital policies, and incentives to promote the full participation of girls and women in all stages of the AI lifecycle.

- Other Critical Areas: The framework also provides detailed policy guidance for Culture (addressing the impact of AI on language and heritage), Education and Research, Communication and Information (combating disinformation), Economy and Labor (ensuring fair labor transitions), and Health and Social Well-being.

It is essential to view these three components—Values, Principles, and Policy Areas—not as a menu of disconnected options but as a deeply integrated and logical system:

The Values (the "Why") establish the ultimate ethical purpose.

The Principles (the "What") define the necessary characteristics of a system that can achieve that purpose.

The Policy Areas (the "How" and "Where") provide the concrete domains for implementing the principles and measuring adherence to the values.

For instance, the Value of "Ensuring Diversity and Inclusiveness" is operationalized through the Principle of "Fairness and Non-Discrimination." This principle is then applied within the Policy Area of "Economy and Labor" (e.g., in an AI-powered hiring tool), and the tool's potential risks are proactively evaluated using an "Ethical Impact Assessment."

Understanding this nested, hierarchical logic is the key to implementing the framework holistically and effectively, rather than through a piecemeal approach that is destined to fail.

2. The Strategic Value Proposition: Why the UNESCO Framework is a Non-Negotiable for Your Business

While the ethical case for responsible AI is clear, the business case is equally compelling. Adopting the UNESCO Recommendation is not an act of corporate altruism; it is a strategic imperative that de-risks investment, builds tangible assets of trust, and positions an organization for long-term, sustainable success in the AI era.

2.1. De-Risking Your AI Investment: Mitigating Legal, Reputational, and Operational Peril

The development and deployment of AI systems introduce a new spectrum of significant risks. The UNESCO framework provides a comprehensive and globally endorsed model for identifying, assessing, and systematically mitigating these risks throughout the AI lifecycle.

- Legal and Compliance Risk: The Recommendation is explicitly based on international law and human rights principles and is designed to guide and inform the creation of future national and regional regulations. As governments worldwide move from asking whether to regulate AI to how, early and robust alignment with the UNESCO framework becomes the most effective defense against future legal challenges, regulatory scrutiny, and potentially crippling fines under emerging legislation like the EU AI Act. It transforms compliance from a reactive, costly scramble into a proactive, strategic posture.

- Reputational Risk: In a hyper-connected world, a single ethical failure can cause swift and irreversible damage to a brand's reputation and market value. High-profile incidents, such as Amazon's biased recruiting tool that systematically disadvantaged female candidates or privacy violations stemming from the misuse of personal data, serve as cautionary tales. The framework's foundational principles of Fairness, Non-Discrimination, Privacy, and Transparency are a direct and powerful antidote to these reputational poisons, safeguarding the brand equity that takes decades to build.

- Operational Risk: AI systems that are opaque "black boxes," insecure, or unreliable are significant operational liabilities. They can produce erroneous outputs, fail in unpredictable ways, and be vulnerable to malicious attacks. The principles of Explainability, Safety, Security, and Human Oversight directly address these operational vulnerabilities. They ensure that systems are robust, dependable, auditable, and ultimately under human control, thereby protecting core business processes from disruption and failure.

2.2. Building an Unshakeable Foundation of Trust with Customers, Employees, and Regulators

In the digital economy, trust is not a soft metric; it is a hard asset that drives customer loyalty, employee engagement, investor confidence, and regulatory goodwill. The UNESCO framework is a practical blueprint for architecting this trust at every level of the organization.

- Customer Trust: Consumers are increasingly sophisticated and skeptical about how their data is used and how algorithmic decisions are made. They are far more likely to engage with, purchase from, and remain loyal to brands that they perceive as using AI fairly, ethically, and transparently. The principles of Transparency and Explainability are particularly crucial here. When a customer understands why they were offered a particular product or why a decision was made about their application, skepticism is reduced, and adoption of AI-powered services increases.

- Employee Trust: The success of any internal AI transformation depends on workforce buy-in. Employees are more likely to embrace and effectively utilize new AI tools when they believe the systems are designed to be equitable, supportive, and empowering, rather than biased, exploitative, or purely for surveillance. Fostering this internal trust is critical for accelerating adoption, improving productivity, and retaining top talent in a competitive market.

- Regulator and Investor Trust: In an environment of growing regulatory scrutiny, proactive and demonstrable adherence to a globally recognized ethical standard like the UNESCO Recommendation sends a powerful signal of responsible governance. It shows regulators that the organization is a serious and collaborative partner in navigating the complexities of AI. For investors, it indicates a mature approach to risk management and a commitment to sustainable, long-term value creation. Recognizing this, UNESCO is actively working with companies and the investment community to build a global dataset that can drive and reward transparent, responsible AI adoption.

2.3. The UNESCO Business Council: A Platform for Collaboration and Co-creation

Crucially, organizations are not expected to undertake this journey in isolation. UNESCO has actively engaged the private sector by establishing the UNESCO Business Council for the Ethics of AI, a vital platform for collaboration, exchanging best practices, and collectively shaping the future of AI governance.

Co-chaired by Microsoft and Telefónica, the Council provides a forum for companies to work directly with UNESCO on the practical implementation of the Recommendation. Its activities include co-designing and refining critical tools like the Ethical Impact Assessment (EIA), providing industry insights to inform the development of intelligent regional regulations, and fostering a competitive environment where ethical practices are a shared standard. Membership is open to any private sector entity willing to endorse the Recommendation and actively participate in its mission. This initiative gives businesses an opportunity not only to learn from their peers but also to contribute to the very standards by which they will be governed.

The existence of these practical implementation tools and collaborative bodies underscores a critical strategic point. It is no longer sufficient for a company to simply publish a set of internal ethics principles and declare its commitment to responsible AI. The new, higher standard of care is the ability to demonstrate adherence to a comprehensive, global framework. This means moving beyond claims to evidence. The organizations that will win the trust of stakeholders are those that can "show their work"—by conducting and documenting Ethical Impact Assessments, by participating in multi-stakeholder dialogues like the Business Council, and by being transparent about their governance processes and the steps they are taking to align with global norms. This demonstrable commitment to ethical practice is what builds deep, defensible, and lasting trust.

3. The Implementation Blueprint: Architecting an Enterprise AI Governance Framework

Translating the UNESCO Recommendation from principle to practice requires a deliberate and structured approach to governance. An effective AI governance framework is not a single policy document but a holistic system encompassing people, processes, and technology, designed to embed ethical considerations into the very fabric of the organization.

3.1. The People Pillar: Establishing Human-Centric Oversight

Effective AI governance is, at its core, a human endeavor. Technology alone cannot ensure ethical outcomes. Success depends on clear leadership, dedicated and empowered oversight bodies, and a well-educated workforce.

3.1.1. The Role of Leadership in Cultivating an Ethical AI Culture

The ultimate responsibility for an organization's ethical posture rests with its senior leadership. The CEO and C-suite must set a clear and unambiguous tone from the top, championing the adoption of the AI ethics framework as a strategic priority. This goes beyond mere endorsement. Leadership must actively invest in the necessary resources, including comprehensive training programs; establish and enforce clear internal policies; and, most importantly, foster a culture of psychological safety and open dialogue.

Employees at all levels must feel empowered to raise ethical questions and dilemmas without fear of reprisal. This commitment from leadership is the essential catalyst for embedding ethics into the corporate DNA and moving from a culture of compliance to one of conscience.

3.1.2. Chartering Your AI Ethics Committee (or Review Board)

The central operational hub for AI governance is a dedicated AI Ethics Committee or Review Board. This body is not a philosophical debating society; it is a critical risk management function with a clear mandate to provide strategic oversight, review high-impact AI projects, and ensure all AI initiatives align with the organization's ethical principles and policies.

- Composition: To be effective, the committee must be cross-functional and diverse. It is a mistake to view AI ethics as a purely technical or legal issue. The committee should include representatives with expertise from data science, engineering, legal, compliance, human resources, Diversity, Equity, and Inclusion (DEI), product management, and front-line business units who understand the real-world context of AI deployments. Including external advisors, such as academic ethicists or civil society representatives, can provide valuable independence and critical perspective.

- Authority: The committee must have real authority. Its role cannot be merely advisory; it must be empowered to pause or even halt the deployment of an AI system when significant ethical concerns are identified and unresolved. Without this authority, the committee risks becoming a symbolic "ethics-washing" exercise.

- Operations: The committee's work should be structured and systematic. It should operate on a risk-based review model, focusing its deep-dive assessments on high-risk systems that affect human rights, legal standing, or access to opportunities. It needs standardized workflows for project submission, including requirements for documentation like an AI Impact Review and fairness testing results. Crucially, its oversight responsibilities must extend beyond pre-deployment review to include post-deployment monitoring of model performance and incident trends, with mechanisms to trigger re-review if a system's behavior changes significantly.
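The risk-based review model and the re-review trigger described above can be sketched in a few lines of Python. This is a minimal illustration rather than anything prescribed by the UNESCO Recommendation: the `AISystem` fields, the two tier names, and the 0.05 drift threshold are hypothetical placeholders that an organization would replace with its own criteria.

```python
from dataclasses import dataclass
from enum import Enum


class ReviewTier(Enum):
    STANDARD = "standard"      # lightweight checklist review
    FULL_COMMITTEE = "full"    # deep-dive review by the ethics committee


@dataclass
class AISystem:
    # All fields are illustrative assumptions, not UNESCO-defined criteria.
    name: str
    affects_human_rights: bool
    affects_legal_standing: bool
    affects_access_to_opportunities: bool


def review_tier(system: AISystem) -> ReviewTier:
    """Route a system to deep-dive review if it touches any high-risk area."""
    high_risk = (
        system.affects_human_rights
        or system.affects_legal_standing
        or system.affects_access_to_opportunities
    )
    return ReviewTier.FULL_COMMITTEE if high_risk else ReviewTier.STANDARD


def needs_re_review(baseline_metric: float, current_metric: float,
                    max_drift: float = 0.05) -> bool:
    """Flag a deployed system for re-review when a monitored metric drifts.

    The 0.05 default is a placeholder; a sensible threshold depends on the metric.
    """
    return abs(current_metric - baseline_metric) > max_drift
```

Under these assumptions, a resume-screening tool that affects access to opportunities would be routed to the full committee, while a simple FAQ chatbot would receive only the standard review.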

3.1.3. Building Organizational Capacity: A Strategy for Enterprise-Wide AI Ethics Training

An empowered committee and a strong leadership tone are necessary but not sufficient. The entire organization must be equipped with the knowledge to use AI responsibly. A comprehensive training strategy is therefore a critical pillar of governance.

- Necessity: Training is the primary mechanism for mitigating risk at the individual employee level. It ensures compliance with internal policies and external regulations, and it empowers employees to leverage AI tools productively while avoiding common pitfalls like over-reliance on automated systems or perpetuating bias.

- Curriculum: Foundational AI literacy—understanding what AI is and its basic concepts—is the starting point. However, the core of the curriculum must focus on the ethical principles outlined in the UNESCO framework: fairness, bias detection, transparency, accountability, and privacy. Training must also cover the specifics of the organization's internal AI policies, data security protocols, and guidelines on acceptable use, including the critical rule that non-public, confidential, or proprietary information must never be entered into public AI models like ChatGPT.

- Format and Delivery: One-size-fits-all training is ineffective. The content and format must be tailored to the specific audience; developers require deep technical training on bias mitigation techniques, while business leaders need a strategic overview of risks and opportunities. The most effective training moves beyond theoretical lectures to include hands-on workshops, real-world case studies, and interactive, story-based scenarios that allow employees to practice ethical decision-making in a safe environment. Finally, because AI technology and its ethical implications are constantly evolving, training cannot be a one-time event. It must be a continuous program with regular updates and refreshers.

3.2. The Process Pillar: Embedding Ethics into the AI Lifecycle

The second pillar of governance involves creating formal processes and policies that embed ethical considerations into every stage of the AI lifecycle, from ideation and data collection to deployment, monitoring, and eventual retirement.

3.2.1. Proactive Risk Management with the Ethical Impact Assessment (EIA)

The Ethical Impact Assessment (EIA) is a core procedural tool called for by the UNESCO Recommendation. It is a structured, proactive process designed to ensure systematic consideration of a potential AI project's impact before it is built and deployed. The EIA's purpose is to identify, assess, and plan mitigation for potential negative impacts on human rights, fairness, privacy, the environment, and the other core values of the framework. Organizations should adopt or adapt UNESCO's formal EIA methodology and make its completion a mandatory gateway for any new AI project, particularly those classified as high-risk. The EIA should not be treated as a one-time, check-the-box exercise but as a living document that is revisited and updated as the system evolves and new impacts are understood.
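One way to picture the EIA as a mandatory gateway is a simple approval check that blocks a project until the assessment is complete and every high-severity impact has a planned mitigation. The sketch below is an assumption-laden illustration, not UNESCO's EIA methodology: the `Impact` and `EthicalImpactAssessment` structures and the three-level severity scale are invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Impact:
    description: str
    severity: str          # "low", "medium", or "high" (hypothetical scale)
    mitigation: str = ""   # empty string means no mitigation planned yet


@dataclass
class EthicalImpactAssessment:
    project: str
    impacts: list[Impact] = field(default_factory=list)
    completed: bool = False


def may_proceed(eia: EthicalImpactAssessment) -> tuple[bool, list[str]]:
    """Return (approved, blocking_reasons) for a project's deployment gate."""
    reasons = []
    if not eia.completed:
        reasons.append("EIA has not been completed")
    for impact in eia.impacts:
        if impact.severity == "high" and not impact.mitigation.strip():
            reasons.append(
                f"No mitigation for high-severity impact: {impact.description}"
            )
    return (not reasons, reasons)
```

Because the EIA is meant to be a living document, a check like this would be re-run whenever the list of impacts is updated, not only at initial approval.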

3.2.2. Implementing Robust Data Governance for Privacy and Security

Since data is the lifeblood of AI, its ethical and secure management is a non-negotiable foundation of AI governance. A robust data governance program is essential for ensuring the quality, integrity, privacy, and security of the data used to train and operate AI systems.

- Data Quality and Integrity: The principle of "garbage in, garbage out" is amplified in AI. Low-quality, incomplete, or non-representative data is a primary cause of biased and inaccurate model outcomes. Effective governance requires managing the entire data lifecycle, from responsible sourcing and collection to secure storage, controlled access, and regulated deletion practices.

- Privacy and Security: Organizations must implement stringent data security standards, including encryption, access controls, and regular vulnerability testing, to protect against data breaches and cyberattacks. All data handling must comply with applicable data protection laws, such as the GDPR in Europe. A critical and simple rule must be enforced enterprise-wide: sensitive, confidential, or proprietary corporate or customer data must never be input into public, third-party generative AI tools.
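The enterprise-wide rule against entering sensitive data into public generative AI tools is ultimately a matter of policy and training, but a lightweight technical check can reinforce it. The following sketch screens text before it leaves the organization. The pattern names and regular expressions are hypothetical and deliberately crude; a real deployment would likely rely on a dedicated data-loss-prevention service and the organization's own data classifications.

```python
import re

# Hypothetical patterns for illustration only; they will produce both
# false positives and false negatives.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "confidentiality marker": re.compile(
        r"\b(confidential|proprietary|internal only)\b", re.IGNORECASE
    ),
    "card-like number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def find_sensitive(text: str) -> list[str]:
    """Return the names of all sensitive patterns found in the text."""
    return [
        name for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(text)
    ]


def safe_for_public_tool(text: str) -> bool:
    """True only if no sensitive pattern matches; any match blocks the text."""
    return not find_sensitive(text)
```

A check of this kind fails closed: any match blocks the submission, and the matched pattern names can be shown to the employee so they understand why.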

3.2.3. Crafting and Enforcing an Internal AI Ethics Policy

While the UNESCO framework provides the "why" and "what," a formal internal AI Ethics Policy provides the "how" for employees on a day-to-day basis. This document translates the high-level principles into concrete, enforceable rules of conduct.

- Key Components: A comprehensive policy must clearly define its purpose and scope (i.e., who and what it applies to). It should outline acceptable and prohibited use cases for AI tools. It must detail strict rules regarding data privacy, security, and intellectual property. It needs to clarify employee responsibilities, such as the need to review and validate AI outputs and report concerns. The policy should also establish a formal process for the procurement and approval of third-party AI tools and define the consequences for non-compliance.

- Integration: The AI policy should not exist in a silo. It must be explicitly linked to and consistent with other core corporate policies, such as the Code of Conduct, Anti-Harassment/EEO policies, and Confidential Information policies. Drawing on the public principles of leading technology firms like Google and IBM, which emphasize transparency, fairness, and accountability, can provide a valuable starting point for drafting.

A common fear among business leaders is that this level of governance will stifle the speed and creativity essential for innovation. However, this perspective misreads the nature of modern technological development. A well-designed AI governance framework does not act as a brake but as a set of guardrails that enables and accelerates responsible innovation. By providing development teams with clear ethical boundaries, risk assessment processes, and data handling protocols, governance gives them the confidence and psychological safety to experiment and build safely. It prevents costly ethical missteps and technical debt that can derail projects late in their lifecycle, forcing expensive redesigns or outright cancellation. By building stakeholder trust from the outset, it also creates greater organizational buy-in for deploying new AI systems.

Therefore, leaders should reframe AI governance not as a bureaucratic cost center, but as a strategic investment that de-risks, accelerates, and improves the outcomes of the entire AI portfolio.

4. Deep Dive—Operationalizing Key Principles in High-Stakes Environments

While all ten UNESCO principles are important, some present greater practical challenges for organizations. This section provides a granular, operational deep dive into two of the most critical and complex principles: Transparency and Explainability, and Fairness and Non-Discrimination, using high-stakes business contexts as case studies.

4.1. Illuminating the Black Box: A Guide to Achieving Meaningful Transparency and Explainability (T&E)

The principles of Transparency and Explainability (T&E) are the bedrock of trust and accountability in AI systems. Without them, users, customers, and regulators are left in the dark, unable to understand or challenge algorithmic decisions. For businesses, mastering T&E is not merely a technical challenge; it is a fundamental communication and trust-building imperative.

Distinguishing the Concepts

While often used interchangeably, transparency and explainability are distinct but related concepts.

- Transparency answers the question, "What happened?" It involves being open about the AI system's existence and general function. Key elements of transparency include proactively informing an individual that they are interacting with an AI system (e.g., a chatbot), that content was AI-generated, or that a decision was made using AI. It also includes providing information about the system's general design and the types of data used in its training.

- Explainability answers the question, "Why did it happen?" It is the ability to provide a human-comprehensible justification for a specific decision or output made by the AI system. It details the key factors and logic that led to a particular outcome, making the model's reasoning interpretable.

The Black Box Problem

Achieving meaningful explainability is one of the most significant challenges in modern AI. Many of the most powerful and accurate models, particularly those based on deep learning and neural networks, are inherently complex and opaque. Their internal decision-making processes can involve millions or billions of parameters interacting in non-linear ways, making it exceedingly difficult even for their creators to trace exactly why a specific input led to a specific output. This "black box" nature creates a fundamental tension between model performance and interpretability, posing a major ethical and operational hurdle.

Practical Implementation for Customer-Facing Models

Despite these challenges, organizations can and must take concrete steps to deliver meaningful T&E, especially in high-stakes, customer-facing scenarios.

- Proactive and Clear Notification: The first step is always transparency. Organizations must establish an unwavering policy to clearly and simply inform users whenever they are interacting with an AI system or when a decision that affects their rights or opportunities was significantly informed by one. This includes customer service chatbots, personalized recommendation engines, and automated application screening processes.

- Plain-Language Explanations for High-Stakes Decisions: For decisions with significant consequences, such as the denial of a loan application, an insurance claim, or a job interview, a simple notification is insufficient. The organization must be prepared to provide a plain-language explanation of the decision. This explanation should avoid technical jargon and focus on the primary factors that influenced the outcome. For example, the fintech company ZestFinance (now Zest AI), which uses AI for creditworthiness assessment, has been cited for providing applicants with detailed reasons for its lending decisions, a practice that both builds customer trust and empowers applicants to improve their financial standing.

- Building Trust Through Control and Recourse: Meaningful T&E is not just about one-way information delivery; it is about empowering the individual. The public should have the opportunity to seek clarification from a responsible human agent and understand the basis of any decision that affects them. Providing clear channels for appeal, review, and correction of AI-driven decisions is essential for building trust and ensuring accountability. This level of transparency and engagement reduces customer skepticism, enhances satisfaction, and ultimately drives wider and more confident adoption of AI-powered services.
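One way to turn a model's output into the kind of plain-language explanation described above is to rank the factors that pulled a score down and map each to a sentence a customer can read. The following is a minimal sketch, assuming a simple linear scoring model; the factor names, weights, and baseline values are hypothetical and invented for illustration, not taken from any real lender.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Factor:
    name: str         # internal feature name
    description: str  # plain-language label shown to the applicant
    weight: float     # hypothetical coefficient of a linear scoring model
    baseline: float   # hypothetical reference value for a typical approved applicant


# Hypothetical factors and weights, invented for illustration only.
FACTORS = [
    Factor("debt_to_income", "your debt relative to your income", -4.0, 0.30),
    Factor("on_time_payment_rate", "your record of on-time payments", 3.0, 0.95),
    Factor("credit_history_years", "the length of your credit history", 0.2, 8.0),
]


def contributions(applicant):
    """Score contribution of each factor, relative to the baseline applicant."""
    return {f.name: f.weight * (applicant[f.name] - f.baseline) for f in FACTORS}


def top_reasons(applicant, max_reasons=2):
    """Plain-language sentences for the factors that lowered the score most."""
    contrib = contributions(applicant)
    descriptions = {f.name: f.description for f in FACTORS}
    # Most negative contributions first; factors that helped are not listed.
    negative = sorted((value, name) for name, value in contrib.items() if value < 0)
    return [
        f"A key factor in this decision was {descriptions[name]}."
        for _, name in negative[:max_reasons]
    ]


applicant = {
    "debt_to_income": 0.55,
    "on_time_payment_rate": 0.80,
    "credit_history_years": 10.0,
}
for sentence in top_reasons(applicant):
    print(sentence)
```

For opaque models the contributions would have to come from a post-hoc attribution method rather than from coefficients, but the final step is the same: a short, ranked list of reasons written in the customer's language, paired with a channel for human review.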

4.2. Upholding Fairness and Non-Discrimination: A Case Study in AI-Powered Recruitment

Hiring is one of the highest-stakes and most ethically fraught domains for AI application. An individual's access to employment is a fundamental economic right, and the use of biased AI tools in recruitment can perpetuate systemic inequalities, damage brand reputation, and create significant legal liability under anti-discrimination laws. This makes AI-powered hiring a critical test case for an organization's commitment to the principle of Fairness and Non-Discrimination.

The Root Causes of Algorithmic Bias in Hiring

Understanding how bias enters hiring algorithms is the first step toward mitigating it. The causes are often subtle and systemic.

- Biased Training Data: This is often the most common source of bias. If an AI model is trained on an organization's historical hiring data, and that data reflects past societal or institutional biases (e.g., a historical preference for male candidates in technical roles), the model will learn and codify those biases. The algorithm will not learn to be fair; it will learn to replicate the patterns it is shown, potentially amplifying the original discrimination at scale. The canonical example remains Amazon's experimental recruiting tool, which was scrapped after it was found to penalize resumes containing the word "women's" and to systematically favor male candidates, because it had been trained on a decade of predominantly male resumes.

- Flawed Proxies and Indirect Discrimination: A naive approach to fairness is "fairness through unawareness," which involves simply removing protected attributes like race or gender from the training data. This approach is notoriously ineffective. AI systems are exceptionally good at finding correlations, and they can easily use other, seemingly neutral data points as proxies for the protected attribute. For example, an algorithm could learn to associate attendance at a women's college, participation in certain affinity groups, or living in a particular zip code with gender or race, leading to indirect discrimination that is just as harmful.

- Biased Label Definitions: Bias can be embedded in the very definition of the "successful outcome" the model is trained to predict. For instance, a company might define a "good hire" as an employee who has a long tenure at the company. However, if historical data shows that women have had shorter tenures due to systemic factors like inadequate parental leave policies or a lack of promotion opportunities, training a model to predict "tenure" will inherently bias the system against female applicants.
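The proxy problem described above can be checked directly: if a supposedly neutral feature lets you guess the protected attribute much better than chance, removing the protected attribute alone will not make the model "unaware". The following is a minimal sketch under stated assumptions; the records, the field names (`gender`, `college`, `band`), and the helper `proxy_strength` are all hypothetical and synthetic.

```python
from collections import Counter, defaultdict


def proxy_strength(records, feature, protected):
    """Return (baseline_accuracy, feature_accuracy) for guessing `protected`.

    baseline_accuracy: always guess the overall majority group.
    feature_accuracy:  guess the majority group within each value of `feature`.
    A large gap suggests the feature can act as a proxy.
    """
    overall = Counter(r[protected] for r in records)
    baseline = max(overall.values()) / len(records)

    by_value = defaultdict(Counter)
    for r in records:
        by_value[r[feature]][r[protected]] += 1
    correct = sum(max(counts.values()) for counts in by_value.values())
    return baseline, correct / len(records)


# Synthetic applicant records, invented for illustration only.
records = (
    [{"gender": "F", "college": "womens", "band": "junior"}] * 2
    + [{"gender": "F", "college": "womens", "band": "senior"}] * 2
    + [{"gender": "F", "college": "coed", "band": "senior"}] * 1
    + [{"gender": "M", "college": "coed", "band": "junior"}] * 3
    + [{"gender": "M", "college": "coed", "band": "senior"}] * 2
)

for feature in ("college", "band"):
    baseline, accuracy = proxy_strength(records, feature, "gender")
    print(f"{feature}: baseline={baseline:.2f}, with feature={accuracy:.2f}")
```

In this synthetic data the college type recovers gender far better than the seniority band does, which is the signature of a proxy. A real audit would run this kind of check across all features and combinations of features, on much larger samples.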

A Multi-Pronged Mitigation Strategy

Given the complexity of bias, there is no single silver-bullet solution. Organizations must adopt a multi-pronged strategy that combines technical interventions with robust human oversight.

- Pre-processing Techniques: These methods focus on fixing the data before it is fed to the model. This can involve techniques like reweighting data points to give more importance to underrepresented groups or resampling the dataset to create a more balanced representation.

- In-processing Techniques: These methods build fairness constraints directly into the model's training process. The algorithm is optimized not just for accuracy but also for a chosen fairness metric, forcing it to learn less discriminatory patterns.

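- Post-processing Techniques: These methods adjust the model's outputs after a prediction has been made. For example, one could set different decision thresholds for different demographic groups to equalize selection rates. Because group-specific thresholds can themselves conflict with anti-discrimination law in some jurisdictions, this option calls for legal review before it is used in hiring.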

- Human-in-the-Loop Oversight: Ultimately, technology is a tool to assist, not replace, human judgment in complex decisions like hiring. The UNESCO principle of Human Oversight and Determination is paramount. AI can be used to screen for skills and identify a diverse pool of candidates, but the final decisions regarding interviews and offers must remain in the hands of trained human recruiters and hiring managers who can apply context, nuance, and ethical judgment.
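To make the pre-processing idea concrete, the sketch below shows one common reweighting scheme (often called reweighing): each training example receives the weight P(group) × P(label) / P(group, label), so that group membership and the historical outcome become statistically independent in the weighted data. The groups, labels, and function names are hypothetical and the data is synthetic; this is an illustration of the technique, not a complete mitigation pipeline.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Weight each example by P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_selection_rate(groups, labels, weights, group):
    """Weighted share of positive labels (1 = hired) within one group."""
    selected = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    total = sum(w for g, w in zip(groups, weights) if g == group)
    return selected / total


# Synthetic historical hiring data: 4 of 6 men hired, 1 of 4 women hired.
groups = ["M"] * 6 + ["F"] * 4
labels = [1, 1, 1, 1, 0, 0] + [1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
for group in ("M", "F"):
    raw = weighted_selection_rate(groups, labels, [1.0] * len(labels), group)
    adjusted = weighted_selection_rate(groups, labels, weights, group)
    print(f"{group}: raw rate={raw:.2f}, reweighted rate={adjusted:.2f}")
```

After reweighting, both groups show the same weighted selection rate, so a model trained with these sample weights no longer sees group membership as predictive of the historical outcome. This addresses only the imbalance in the labels; proxies, label definitions, and human review still need the separate measures described above.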

5. Charting Your Course: A Phased Roadmap to Ethical AI Maturity

Implementing the UNESCO framework is a strategic journey, not a one-time project. It requires a phased, systematic approach to build foundational capabilities, develop and integrate governance structures, and foster a culture of continuous improvement. A phased roadmap allows an organization to manage this complex transformation in a deliberate and achievable manner, ensuring that each stage builds upon the last.

Over time, this approach builds organizational capacity, integrates new processes thoughtfully, and cultivates an ethical AI culture. The following outline synthesizes the guidance from the UNESCO Recommendation and best practices in corporate governance into a tangible, actionable plan. It is designed to be used by leadership to structure their implementation efforts, assign responsibilities, allocate resources, and track progress toward achieving ethical AI maturity.

Phase 1: Foundation & Assessment (Months 1–3)

- Key Activities
  - Secure leadership buy-in; C-suite formally endorses UNESCO framework as strategic priority.
  - Form a cross-functional steering committee with executive sponsorship.
  - Conduct AI inventory and high-level risk assessment (focus on critical areas like hiring, customer data).
  - Launch mandatory enterprise-wide AI literacy training covering fundamentals and UNESCO principles.

- Primary Stakeholders
  - C-Suite, Board of Directors, Chief Legal Officer, Chief Information/Technology Officer, Head of Risk Management.

- Necessary Resources
  - Executive sponsorship and strategic communication.
  - Initial budget for training platforms and content.
  - Dedicated time from senior leaders for steering committee work.

- Measurable Outcomes
  - AI risk register created and prioritized.
  - Steering committee charter approved.
  - ≥80% of employees complete foundational AI literacy training.

Phase 2: Framework Development & Integration (Months 4–9)

- Key Activities
  - Formally charter the AI Ethics Committee (clear authority, membership, procedures).
  - Draft and ratify internal AI Ethics Policy with stakeholder feedback.
  - Develop and pilot Ethical Impact Assessment (EIA) process on 1–2 medium/high-risk projects.
  - Develop and deploy advanced, role-specific training for key groups (data scientists, HR, product managers).

- Primary Stakeholders
  - AI Ethics Committee, HR, Legal & Compliance, Data Science & Engineering, Product Management, Internal Communications.

- Necessary Resources
  - Time allocation for committee duties.
  - Legal/expert review of policy and EIA process.
  - Investment in learning management systems for role-specific training.

- Measurable Outcomes
  - AI Ethics Committee operational with regular meetings.
  - Internal AI Ethics Policy published and accessible.
  - At least two EIAs completed and reviewed.
  - Key high-risk department personnel complete role-specific training.

Phase 3: Scaling & Continuous Improvement (Months 10+)

- Key Activities
  - Make EIAs mandatory for all new high-risk AI projects.
  - Implement continuous monitoring/auditing for deployed AI (drift, bias, security).
  - Establish feedback channels for reporting ethical concerns; update policy and EIA regularly.
  - Engage with external AI ethics ecosystem (e.g., UNESCO Business Council).

- Primary Stakeholders
  - All Business Units, Internal Audit, External Relations, Procurement, Customer Support.

- Necessary Resources
  - Budget for monitoring/auditing tools.
  - Resources for managing feedback and policy updates.
  - Resources for external engagement (fees, travel).

- Measurable Outcomes
  - 100% of new high-risk AI systems have documented/approved EIAs.
  - Quarterly/semi-annual audit reports produced and reviewed by leadership.
  - AI Ethics Policy reviewed/updated at least annually.
  - Organization joins or actively participates in an external AI ethics body.

Conclusion: The Ethical Advantage in the Age of AI

The relentless advance of artificial intelligence has fundamentally altered the strategic landscape for every organization. It has become unequivocally clear that ethical governance is no longer an academic discussion or a peripheral corporate social responsibility initiative; it is an urgent and central strategic priority.

The risks associated with ungoverned AI—legal jeopardy, reputational ruin, and operational failure—are too significant to ignore. In this complex and rapidly evolving environment, the UNESCO Recommendation on the Ethics of Artificial Intelligence offers a comprehensive, practical, and globally endorsed blueprint for navigating the path forward.

Organizations that choose to proactively and holistically embrace this framework will achieve far more than mere risk mitigation. By embedding the principles of fairness, transparency, accountability, and human dignity into their technology and culture, they will build a profound and durable foundation of trust with their customers, employees, investors, and regulators. This trust is the ultimate competitive advantage in the digital age. It fosters responsible innovation, unlocks greater value from AI investments, and builds the organizational resilience needed to thrive amidst technological disruption.

The journey to trustworthy AI is not simple. It is a sustained commitment to embedding human values into the technological core of the enterprise. It is a challenge that demands decisive leadership, a shift in corporate culture, and the deliberate construction of new governance processes. The path requires investment, diligence, and a willingness to prioritize long-term integrity over short-term expediency. Yet, the rewards for those who undertake this journey are immense. The resilience, loyalty, and sustainable growth that stem from a deep commitment to ethical AI will define the leading and most respected organizations of the next decade.

Sources:

- https://www.unesco.org/en/artificial-intelligence/recommendation-ethics

- https://www.unesco.org/en/articles/unescos-recommendation-ethics-artificial-intelligence-key-facts

- https://www.caidp.org/events/unesco/

- https://www.unesco.org/en/artificial-intelligence

- https://www.dataguidance.com/opinion/international-unesco-recommendation-ethics

- https://unesdoc.unesco.org/ark:/48223/pf0000376713

- https://aiexponent.com/navigating-global-ai-ethics-a-practical-guide-to-the-unesco-recommendation/

- https://www.markovml.com/blog/ethical-ai

- https://www.ibm.com/think/topics/ai-governance

- https://www.unesco.org/en/articles/recommendation-ethics-artificial-intelligence

- https://www.researchgate.net/publication/374234687_Ten_UNESCO_Recommendations_on_the_Ethics_of_Artificial_Intelligence

- https://tsaaro.com/blogs/ai-ethics-on-the-global-stage-a-deep-dive-into-unescos-recommendation-on-the-ethics-of-artificial-intelligence/

- https://osf.io/csyux/download

- https://unsceb.org/sites/default/files/2022-09/Principles%20for%20the%20Ethical%20Use%20of%20AI%20in%20the%20UN%20System_1.pdf