Introduction: The Productivity Revolution Will Not Be Typed

For decades, the keyboard and mouse have been the undisputed conduits of professional productivity. They are familiar tools, yet they are also relics of a bygone computing era, imposing a fundamental and often unrecognized limit on the speed of business.

We have become so accustomed to the friction of translating thoughts into text through our fingertips that we fail to see the inefficiency inherent in the process. In this article I argue that we are at a historic inflection point. The convergence of two powerful artificial intelligence technologies, Automatic Speech Recognition (ASR) with near-human accuracy and the sophisticated structuring capabilities of Large Language Models (LLMs), has unlocked voice as the new, superior operating system for business. This is not a future prediction; it is a present-day reality, and businesses that ignore this shift do so at their own peril.

This article will first establish the fundamental "why" behind this shift by examining the human input/output problem. It will then detail the technological catalysts that have made this revolution possible now. Following this, it will analyze the profound paradigm shift in user interaction, explore transformative business use cases with quantifiable ROI, and conclude with a practical framework for adoption.

Section 1: The Bandwidth Imperative: Moving at the Speed of Thought

1.1 The Quantitative Case: The Inefficiency of Our Fingers

The single greatest limiting factor in translating thought into digital action for the majority of knowledge workers is the keyboard. The chasm between the speed of cognition and the speed of manual data entry represents a persistent bottleneck that throttles organizational output.

The data on this input/output inefficiency is unambiguous. The average typing speed for a professional is approximately 40 to 50 words per minute (wpm). In stark contrast, the average rate for conversational English speech is between 140 and 150 wpm. This represents roughly a 3x to 4x increase in raw data throughput (2.8x to 3.75x across these ranges). While some expert typists can achieve speeds exceeding 200 wpm, they are the exception; for the vast majority of the workforce, speaking is an inherently higher-bandwidth channel for communication.

This differential is not merely a statistical curiosity; it represents a tangible "productivity tax" paid every time an employee composes an email, writes a report, or enters data into a system. A 1,000-word document, for example, which would take an average professional 20 to 25 minutes to type, could be dictated in roughly 7 minutes. When extrapolated across an entire organization, the cumulative time savings are monumental. Businesses that rely exclusively on typing are unknowingly throttling their own intellectual output, paying a constant, low-level friction cost measured in thousands of lost work-hours per year.
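The arithmetic behind the 1,000-word example can be checked with a few lines of Python. This is a minimal sketch: the function name is hypothetical, and the rates are simply the typing and speaking ranges quoted above, assumed to be sustained without pauses or corrections.

```python
def minutes_to_produce(words: int, wpm: float) -> float:
    """Minutes needed to produce `words` at a steady rate of `wpm`."""
    if wpm <= 0:
        raise ValueError("wpm must be positive")
    return words / wpm


WORDS = 1_000

# Rates taken from the ranges quoted in the text (words per minute).
typing_fast, typing_slow = minutes_to_produce(WORDS, 50), minutes_to_produce(WORDS, 40)
speaking_fast, speaking_slow = minutes_to_produce(WORDS, 150), minutes_to_produce(WORDS, 140)

print(f"Typing:   {typing_fast:.1f} to {typing_slow:.1f} minutes")
print(f"Speaking: {speaking_fast:.1f} to {speaking_slow:.1f} minutes")
```

The same function can be applied to an organization's own word counts and measured rates to estimate the size of the "productivity tax" for a given workflow.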

1.2 The Cognitive Case: Aligning with the Stream of Consciousness

Beyond the raw speed, the voice-first workflow aligns more naturally with the fundamental process of human ideation. The assertion that "we think more like we speak" finds strong support in cognitive science. Spoken language is often an ad-hoc, off-the-cuff, and unfiltered representation of our thoughts as they occur, closely mirroring what psychologists term the "stream of consciousness". Techniques like stream-of-consciousness journaling are used specifically to bypass the "inner critic" and mental barriers, allowing for a raw externalization of internal thoughts to reduce mental clutter and uncover subconscious ideas.

Conversely, the act of writing is a powerful but different cognitive tool. It facilitates critical thinking, improves memory, and supports conceptual learning precisely because it forces a slower, more deliberate process of analysis, reflection, and self-editing. The traditional process of typing a document forces two distinct and demanding cognitive tasks to occur simultaneously: Idea Generation (conceiving the thought) and Idea Structuring (organizing, formatting, and refining the thought for a specific medium). This concurrent processing is inefficient and creates cognitive drag.

The new voice-AI workflow fundamentally changes this dynamic by decoupling these two tasks. Speaking into a transcription tool allows for a pure, high-bandwidth "brain dump" that focuses exclusively on generation. The cognitive load of structuring, formatting, and condensing that raw output is then offloaded to an LLM in a separate, subsequent step. This workflow does not eliminate the need for structured thought; it optimizes it. It empowers professionals to capture the raw, creative phase of ideation at maximum velocity and then apply the near-instantaneous power of AI to perform the laborious structuring tasks that would have previously inhibited the initial flow of expression.

Section 2: The Two-Part Catalyst: Why Now?

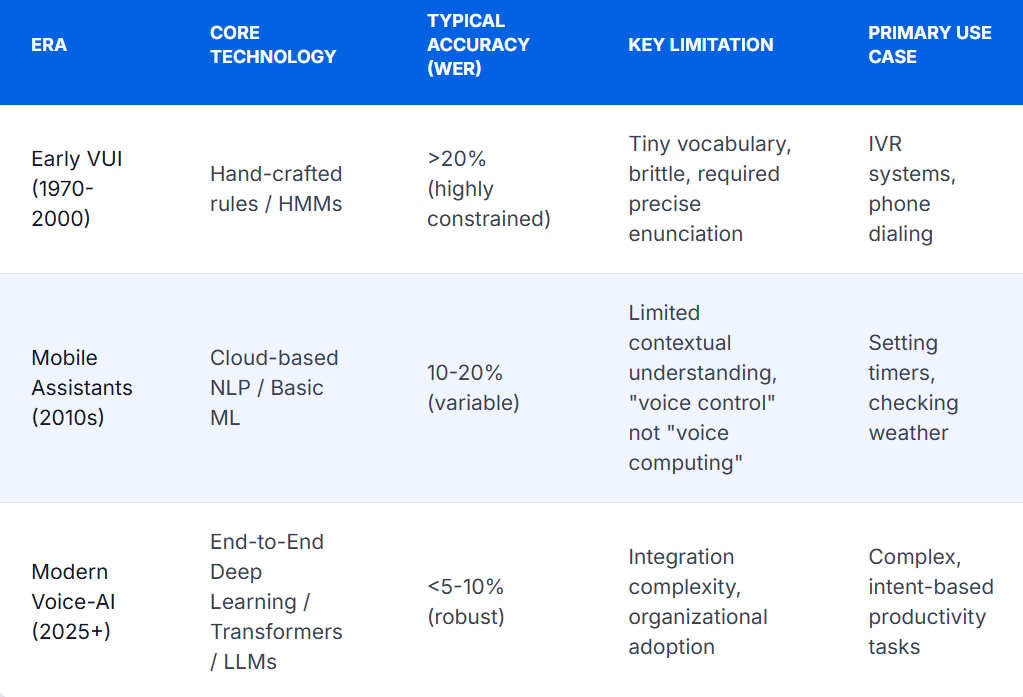

The concept of speaking to computers is not new, having been a staple of science fiction and a goal of researchers for over half a century. However, previous attempts failed to achieve widespread business adoption due to critical technological shortcomings. The current revolution is enabled by the simultaneous maturation of two distinct but complementary AI technologies.

2.1 Part A: The Accuracy Revolution in Speech Recognition

The history of Automatic Speech Recognition (ASR) is a story of slow progress followed by a dramatic, game-changing leap. The journey began in 1952 with Bell Labs' "AUDREY," a system that could only recognize spoken digits, followed by systems like IBM's "Shoebox" in 1962 with a mere 16-word vocabulary. Early commercial products of the 1990s, such as Dragon Dictate, were expensive, cumbersome, and required users to pause unnaturally between each word.

For decades, ASR accuracy was hamstrung by persistent challenges: distinguishing speech from background noise, understanding diverse accents, separating multiple speakers, and recognizing technical jargon. For a long time, accuracy plateaued at around 80%, equivalent to a Word Error Rate (WER) of roughly 20%, meaning about one in every five words was incorrect. This was far short of the 96-98% accuracy of human transcriptionists and made the technology too frustrating for serious professional use. The effort required to correct a machine-generated transcript was often greater than the effort of typing it from scratch.

The inflection point arrived with the transition from older statistical methods, like Hidden Markov Models (HMMs), to modern, end-to-end deep learning architectures. Innovations such as the Transformer architecture, Connectionist Temporal Classification (CTC), and training on massive datasets—OpenAI's Whisper model, for instance, was trained on 680,000 hours of multilingual data—allowed models to learn the nuances of human speech without needing hand-crafted phonetic rules.

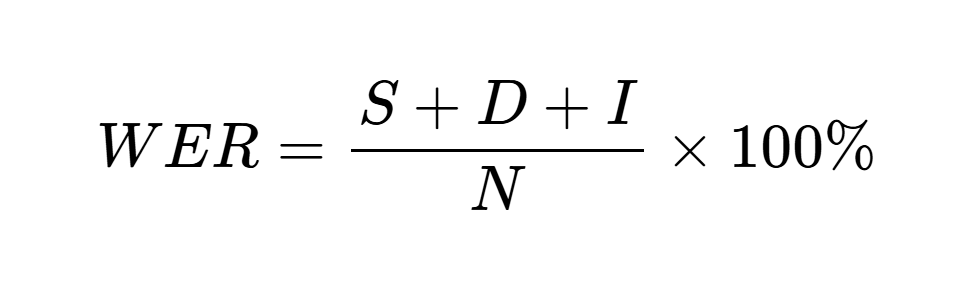

This breakthrough is quantifiable. Modern ASR systems now routinely achieve accuracy rates exceeding 95% in ideal conditions. The industry-standard metric, Word Error Rate (WER), is calculated as:

$$\mathrm{WER} = \frac{S + D + I}{N}$$

where S is substitutions, D is deletions, I is insertions, and N is the total number of words in the reference text. A WER below 5% is now considered near-human performance, a benchmark that leading models consistently meet or beat on clean audio datasets. More importantly, performance in real-world conditions has improved dramatically. For example, AssemblyAI has documented a 30% improvement in accuracy in noisy environments, and Deepgram's Nova-3 model boasts a 54% reduction in WER for streaming audio compared to its competitors.
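As a rough illustration of the WER definition, the sketch below finds the minimum number of word-level substitutions, deletions, and insertions needed to turn the reference into the ASR output (a standard edit-distance computation) and divides that count by N, the number of reference words. The function name, the lowercase-and-split tokenization, and the sample sentences are assumptions made for illustration; real evaluations typically apply more careful text normalization (punctuation, numerals, contractions) before scoring.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return (S + D + I) / N using word-level edit distance."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # substitution, or a match when cost is 0
            )
        prev = curr
    return prev[-1] / len(ref)


reference = "the quick brown fox jumps"
print(word_error_rate(reference, "the quick brown dog jumps"))  # one substitution in five words
```

Because insertions count as errors, WER can exceed 100% when the output is much longer than the reference, which is why it is reported as an error rate rather than as "100% minus accuracy" in the strict sense.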

Technology adoption often hinges on crossing a critical "usability threshold." For ASR, this is the point where the accuracy is so high that the cognitive friction of making corrections becomes less than the friction of typing. The leap from 80% accuracy (one error every five words) to over 95% accuracy (one error every 20 words) is not an incremental improvement; it is a phase change. It transforms the tool from a frustrating gimmick into a reliable productivity engine. Any business leader whose opinion of voice recognition was formed based on the technology of five or ten years ago is operating on dangerously outdated assumptions.

2.2 Part B: The Intelligence Revolution in Language Models

Near-perfect transcription, however, is only half of the solution. A flawless transcript of human speech is often a verbose, disorganized, and repetitive "stream of consciousness" that is not a finished business document. This is where the second catalyst, the Large Language Model (LLM), becomes indispensable.

LLMs excel at acting as a "universal formatter." They can ingest unstructured, natural language text and, with simple instructions, reformat it into virtually any desired structure. The rapid advancements in the reasoning and instruction-following capabilities of LLMs make them the perfect tool to process raw ASR output. A single, 10-minute voice memo can be transformed into a multitude of finished products:

- A concise, professional email.

- A formal memorandum with headings and bullet points.

- A project proposal with sections for objectives, timeline, and budget.

- Structured data ready to populate fields in a spreadsheet or CRM system.

- A comprehensive article, such as this one.

These two technologies create a virtuous cycle—a productivity flywheel. High-accuracy ASR encourages users to speak more freely, generating a greater volume of raw intellectual output. The power of LLMs to instantly structure that output provides an immediate and tangible reward, reinforcing the voice-first behavior. ASR captures the what (the content), and the LLM handles the how (the format). This synergy is what makes the system so transformative. ASR alone produces a long, messy document. An LLM alone requires a typed prompt. Together, they enable a user to speak a finished document into existence in the exact format required, dramatically lowering the barrier between thought and final product.
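The division of labor described here, where ASR captures the content and the LLM handles the format, can be sketched as a two-stage pipeline. This is a minimal sketch under stated assumptions: every name below is hypothetical, the prompt wording is only one possible instruction, and the two stages are passed in as plain callables so that no particular vendor SDK or API signature is implied.

```python
from typing import Callable

# Hypothetical stage types: each would wrap a real ASR or LLM service in practice.
Transcriber = Callable[[bytes], str]  # audio bytes -> raw transcript
Completer = Callable[[str], str]      # prompt -> model output


def build_format_prompt(transcript: str, target_format: str) -> str:
    """Compose the instruction that asks the LLM to restructure a raw transcript."""
    return (
        f"Rewrite the following dictated transcript as {target_format}. "
        "Remove filler words and repetition, keep every fact, "
        "and do not add new information.\n\n"
        f"Transcript:\n{transcript}"
    )


def voice_to_document(
    audio: bytes,
    target_format: str,
    transcribe: Transcriber,
    complete: Completer,
) -> str:
    """Stage 1: ASR produces the content. Stage 2: the LLM applies the format."""
    transcript = transcribe(audio)
    prompt = build_format_prompt(transcript, target_format)
    return complete(prompt)


# Stand-in stages for demonstration only; they perform no real recognition or generation.
def fake_transcribe(audio: bytes) -> str:
    return "um so the launch is moving to friday and uh legal still needs to sign off"


def fake_complete(prompt: str) -> str:
    return f"[model output for a prompt of {len(prompt)} characters]"


print(voice_to_document(b"", "a concise, professional email", fake_transcribe, fake_complete))
```

Keeping the two stages separate mirrors the decoupling of generation and structuring: the same transcript can be sent through `build_format_prompt` several times with different target formats without re-recording anything.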

Section 3: A New Paradigm: Say What You Want

The convergence of ASR and LLMs is not just an improvement on an old way of working; it represents a fundamental shift in the nature of human-computer interaction (HCI).

3.1 The End of the Command-Based Era

For the past 60 years, our interaction with computers has been governed by the command-based interaction paradigm. From the earliest command-line interfaces to the modern Graphical User Interface (GUI), the user has been in direct control, responsible for telling the computer the specific steps to execute. The user must master the system's "how": click this button, drag that file, select this menu item. The burden of translation from intent to action falls entirely on the human.

3.2 The Dawn of Intent-Based Outcome Specification

AI ushers in the third major UI paradigm: intent-based outcome specification. In this model, the user simply states their desired outcome—the "what"—and the computer assumes the responsibility of figuring out the "how."

This marks a complete reversal of the locus of control, from the user to the machine. While this paradigm can function with typed text, voice is its most natural and efficient modality. The spoken word is inherently about expressing intent. It is far more fluid to say, "Find my notes from the last three meetings with Alpha Technical Solutions, summarize their key concerns, and draft a reply addressing each point," than it is to manually search for three separate files, read them, synthesize the information, and then type a new document.

This combination of ASR and LLMs is the first technology to truly deliver on the long-held HCI dream of "Do what I mean, not what I say". Previous interfaces could only ever "do what you say" or, more accurately, "do what you click." A modern AI system, however, can understand the intent behind a vague command. It can parse the request, search across multiple platforms (email, documents, meeting transcripts), identify the relevant information, synthesize it, and generate the requested output, all without the user needing to specify the exact location or name of a single file.

This shift requires a mental adjustment from users, who must learn to delegate, and a strategic one from businesses, which must empower their teams with these new capabilities. The most productive employees will no longer be the fastest typists, but those who are most adept at clearly articulating their intent to an AI agent.

Section 4: The Productivity Flywheel: Voice-AI Use Cases Across the Enterprise

The theoretical benefits of this new paradigm are already translating into concrete, measurable value across business functions. The voice-AI flywheel is actively creating efficiencies, improving data quality, and driving revenue.

4.1 Sales & Business Development: The Voice-to-CRM Revolution

Manual data entry into Customer Relationship Management (CRM) systems is a universally despised task, a primary driver of low user adoption, and a major cause of poor data quality that hampers forecasting and strategy. Voice-to-CRM technology directly addresses this pain point. It allows sales representatives to dictate meeting notes, log calls, and update contact records simply by speaking into a mobile app, often while in transit between appointments.

The quantifiable impact is dramatic:

- Time Savings: Sales representatives report saving between 6 and 8 hours per week that was previously spent on manual CRM entry, time that is directly reallocated to high-value activities like prospecting and closing deals.

- Data Quality and Volume: The richness and volume of data captured can increase by 200% to 1000%. Instead of brief, delayed notes, the CRM is populated with detailed, immediate conversational data.

- CRM Adoption: By removing the single greatest point of friction, organizations have seen CRM adoption rates soar to over 90%.

- Return on Investment: According to McKinsey, the adoption of AI in sales processes can increase ROI by 10% to 20%.

This technology does more than just save time; it transforms the CRM from a passive system of record into an active system of intelligence. When a CRM is populated with sparse, manually entered data, it serves as little more than a digital Rolodex. When it is flooded with rich, detailed, conversational data captured via voice, it becomes the raw material for a new layer of AI-driven analysis. Conversation intelligence platforms can then mine this data to identify successful pitch patterns, detect customer sentiment, flag churn risks, and provide data-driven coaching, turning the CRM into an intelligent partner in the sales process.
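One practical detail in a voice-to-CRM flow is turning the model's output into validated fields before anything is written to the system of record. The sketch below assumes, purely for illustration, that an LLM has been asked to return a dictated call note as JSON with `account`, `summary`, and `next_steps` keys. The record shape and function name are hypothetical and do not correspond to any specific CRM's schema.

```python
import json
from dataclasses import dataclass, field


@dataclass
class CallNote:
    """Hypothetical CRM record built from a dictated call note."""
    account: str
    summary: str
    next_steps: list[str] = field(default_factory=list)


REQUIRED_FIELDS = ("account", "summary")


def parse_call_note(llm_json: str) -> CallNote:
    """Validate the model's JSON output before it reaches the CRM."""
    data = json.loads(llm_json)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    # Reject notes where a required field is absent or blank.
    missing = [key for key in REQUIRED_FIELDS if not str(data.get(key) or "").strip()]
    if missing:
        raise ValueError(f"missing required fields: {', '.join(missing)}")

    steps = data.get("next_steps") or []
    if not isinstance(steps, list):
        raise ValueError("next_steps must be a list")

    return CallNote(
        account=str(data["account"]).strip(),
        summary=str(data["summary"]).strip(),
        next_steps=[str(step).strip() for step in steps if str(step).strip()],
    )


example = '{"account": "Acme Corp", "summary": "Renewal call went well.", "next_steps": ["Send pricing"]}'
print(parse_call_note(example))
```

Rejecting incomplete output at this boundary, rather than writing partial records, is one way to protect the data quality that the downstream analysis depends on.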

4.2 Meetings & Collaboration: Automating the Admin Tax

The lifecycle of a typical business meeting is laden with low-value administrative work: scheduling, note-taking, summarizing decisions, and tracking action items. AI meeting assistants automate this "admin tax." These tools can join virtual meetings on platforms like Zoom and Microsoft Teams, provide a real-time transcription with speaker labels (diarization), and, once the meeting concludes, automatically generate a concise summary, highlight key topics, and extract a list of action items with assigned owners.

The impact is significant. Microsoft reports that its Copilot users have saved substantial time, with some reducing time spent in meetings by up to six hours, and one company, Globo, saving two hours per employee per month. Automated scheduling alone can save a professional up to 30% of their weekly scheduling time and improve meeting attendance by 40% through automated reminders.

When every meeting is automatically transcribed, summarized, and stored, an organization begins to build an "ambient knowledge base." Every decision, discussion, and commitment becomes a permanent, searchable, and citable asset. This directly solves the pervasive problem of "corporate amnesia," where valuable context and institutional knowledge are lost the moment a meeting ends or an employee leaves. Tools with features like a "Search Copilot" allow users to ask natural language questions across all past conversations, democratizing access to information and breaking down knowledge silos.

4.3 Content Creation & Internal Communications

The "blank page problem" is a familiar hurdle in drafting any new document. The voice-AI workflow provides a powerful solution. A professional can dictate their thoughts on a subject for ten minutes, and an LLM can instantly structure that raw transcript into a well-organized first draft of a report, proposal, or a series of internal emails. This dramatically accelerates the content creation process. Consulting firm Arthur D. Little found that using AI to sort documents and curate content made presentation preparation 50% faster, while KPMG reduced the time needed to write internal audit reports by 30%.

4.4 High-Value Industry Verticals

The impact of this technology is particularly profound in several key industries:

- Healthcare: Automating the transcription of doctor-patient conversations directly into Electronic Health Records (EHRs) is a powerful antidote to physician burnout, saving clinicians hours of documentation time each day.

- Legal: Transcribing depositions, court proceedings, and client calls creates instantly searchable records, dramatically accelerating the costly and time-consuming process of legal discovery and case preparation.

- Financial Services: Use cases range from secure customer authentication via voice biometrics to streamlined loan processing and the delivery of personalized financial advice through intelligent voice agents.

- Customer Service: AI-powered voice bots handle routine inquiries 24/7, reducing customer wait times and operational costs while capturing every interaction for sentiment analysis and continuous process improvement.

Section 5: Activating the Voice Revolution: A Framework for Implementation

Harnessing the power of voice-AI requires a strategic approach that goes beyond simply procuring new software. A successful implementation focuses on integrating the technology into core workflows to solve specific business problems.

- Step 1: Identify the Friction. The first step is to conduct a workflow audit to identify the most significant bottlenecks caused by manual typing and data entry. Where is the administrative tax highest? Common areas include sales reporting, meeting follow-ups, clinical documentation, and internal report generation.

- Step 2: Pilot with a Champion Group. A company-wide rollout is ill-advised. Instead, begin with a pilot program focused on a small, tech-savvy group that feels the identified pain point most acutely, such as a field sales team or a research department. This gradual rollout, led by internal champions, builds momentum, generates early wins, and provides invaluable lessons for broader implementation.

- Step 3: Choose the Right Tools. The technology ecosystem is complex. A critical decision must be made between adopting off-the-shelf solutions (e.g., a standalone Voice-to-CRM app) and developing custom solutions that integrate deeply with proprietary business systems. This evaluation must consider factors like transcription accuracy, security certifications (e.g., SOC 2, HIPAA), integration capabilities via APIs, and the architecture's ability to scale.

- Step 4: Measure, Iterate, and Scale. Before the pilot begins, define clear, quantifiable success metrics. These could include time spent on data entry, CRM adoption rates, data quality scores, or report generation times. The results of the pilot should be used to build a robust business case for a wider, phased rollout across the organization.
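The measurement in Step 4 can be as simple as comparing each metric before and during the pilot. The sketch below is illustrative only: the metric names are hypothetical, and the figures are made-up placeholders rather than measured results.

```python
def percent_change(baseline: float, pilot: float) -> float:
    """Relative change from baseline to pilot, in percent (negative means a reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (pilot - baseline) / baseline * 100.0


# Placeholder values for illustration; replace with figures measured in your own pilot.
baseline_metrics = {"data_entry_hours_per_week": 8.0, "crm_adoption_rate": 0.60}
pilot_metrics = {"data_entry_hours_per_week": 2.0, "crm_adoption_rate": 0.90}

for name, before in baseline_metrics.items():
    after = pilot_metrics[name]
    print(f"{name}: {before} -> {after} ({percent_change(before, after):+.1f}%)")
```

Capturing the baseline before the pilot starts matters more than the calculation itself, since a missing baseline leaves nothing to compare the pilot against.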

Navigating this implementation process—from identifying the right use case to selecting the appropriate technology and ensuring seamless integration—requires specialized expertise.

Conclusion: Your Business Has a Voice. It's Time to Use It.

The era of the keyboard's unchallenged dominance in the workplace is over. The convergence of hyper-accurate Automatic Speech Recognition and intelligent Large Language Models has created a new, voice-first paradigm for productivity that is demonstrably faster, more natural, and more efficient.

This is not a future trend to monitor; it is a present-day reality. The tools are mature, the ROI is proven, and early adopters are already building a significant competitive advantage. Organizations that remain tethered to the manual, friction-filled workflows of the past risk being outpaced in efficiency and intelligence.

The technology to unlock this new frontier of productivity is no longer on the horizon; it is here. But harnessing its full potential requires a deep understanding of both the AI landscape and your unique business processes. A generic solution may not capture the specific nuances that drive value in your organization.

At Alpha Technical Solutions, we specialize in designing and deploying bespoke, voice-powered solutions that transform workflows and drive measurable results. We go beyond off-the-shelf products to build integrated systems that solve your most pressing productivity challenges.

If you are ready to move your business beyond the keyboard and operate at the speed of thought, contact us for a strategic consultation. Let's discuss how to give your business its voice.

References

- can you speak faster than you type? - Hacker News, https://news.ycombinator.com/item?id=10995835

- Speech calculator: how long does your speech take? – Debatrix International, https://debatrix.com/en/speech-calculator/

- Whatever Happened to Voice Recognition? - Coding Horror, https://blog.codinghorror.com/whatever-happened-to-voice-recognition/

- How Stream of Consciousness Journaling Can Transform Your Mental Health - Jyotirgamya, https://jyotirgamya.org/opinion/stream-of-consciousness-journaling/

- Unlocking Stream of Consciousness Writing - Number Analytics, https://www.numberanalytics.com/blog/ultimate-guide-stream-consciousness-writing

- "Brain Drain" Exercise: How Stream-of-Consciousness Writing Can Help Over-Thinking, https://www.theemotionmachine.com/brain-drain-exercise-how-stream-of-consciousness-writing-can-help-over-thinking/

- Writing as a Thinking Tool - MSU Denver, https://www.msudenver.edu/writing-center/faculty-resources/writing-as-a-thinking-tool/

- The Neuroscience Behind Writing: Handwriting vs. Typing—Who Wins the Battle? - PMC, https://pmc.ncbi.nlm.nih.gov/articles/PMC11943480/

- Voice user interface - Wikipedia, https://en.wikipedia.org/wiki/Voice_user_interface

- What is Voice User Interface (VUI)? Definition & Examples (2025) - UX design agency, https://www.designstudiouiux.com/blog/voice-user-interface-design-guide/

- The Rise of Voice User Interfaces (VUIs): Transforming Human ..., https://pageoneformula.com/the-rise-of-voice-user-interfaces-vuis/

- The Impact of Speech Recognition Technology on the Workplace - Kardome, https://www.kardome.com/blog-posts/speech-recognition-technology-workplace

- research-collective.com, https://research-collective.com/limitations-of-voice-recognition-technology/

- What are the Limitations of Speech to Text? - British Legal Technology Forum, https://www.britishlegalitforum.com/news/what-are-the-limitations-of-speech-to-text/

- Advancements in Automatic Speech Recognition (ASR): A Deep ..., 2025, https://futurewebai.com/blogs/advancements-in-automatic-speech-recognition

- Tracing the Evolution of Speech Recognition Through Machine Learning - Medium, https://medium.com/@shaziaparween333/tracing-the-evolution-of-speech-recognition-through-machine-learning-8632e92b4f66

- What is Automatic Speech Recognition? A Comprehensive Overview of ASR Technology, https://www.assemblyai.com/blog/what-is-asr

- Automatic Speech Recognition using Advanced Deep Learning Approaches: A survey, https://arxiv.org/html/2403.01255v2

- Top 6 speech to text AI solutions in 2025 - Fingoweb, https://www.fingoweb.com/blog/top-6-speech-to-text-ai-solutions-in-2025/

- What Is WER in Speech-to-Text? Everything You Need to Know (2025) - Vatis Tech, https://vatis.tech/blog/what-is-wer-in-speech-to-text-everything-you-need-to-know-2025

- Technical Performance | The 2025 AI Index Report | Stanford HAI, https://hai.stanford.edu/ai-index/2025-ai-index-report/technical-performance

- A new interface paradigm | NEXT Conference, https://nextconf.eu/2024/01/a-new-interface-paradigm/

- AI Is First New UI Paradigm in 60 Years - UX Tigers, https://www.uxtigers.com/post/ai-new-ui-paradigm

- The Death of User Interfaces - Muzli - Design Inspiration, https://medium.muz.li/the-death-of-user-interfaces-1aa31796f64d

- The Future of AI Voice Technology: Trends & Impact, https://valasys.com/the-future-of-ai-voice-technology/

- Meeting Summaries, Transcripts, AI Notetaker & Enterprise Search ..., 2025, https://www.read.ai/

- Voice to CRM | Data Entry Transcription to CRM Solution - Hey DAN, 2025, https://heydan.ai/what-is-voice-to-crm/

- Voice-Powered CRMs: Hands-Free Sales Automation for Business - Kanhasoft, https://kanhasoft.com/blog/voice-powered-crms-hands-free-sales-automation/

- Voice to CRM: Boosting Productivity & Data Accuracy - Leadbeam, 2025, https://www.leadbeam.ai/blog/what-is-voice-to-crm

- AI Voice Automation: Revenue Generating Use Cases - AgentVoice, 2025, https://www.agentvoice.com/ai-voice-use-cases-the-ultimate-list/

- 10 speech-to-text use cases to inspire your applications - AssemblyAI, 2025, https://www.assemblyai.com/blog/speech-to-text-use-cases

- How real-world businesses are transforming with AI — with 261 new stories - The Official Microsoft Blog, https://blogs.microsoft.com/blog/2025/04/22/https-blogs-microsoft-com-blog-2024-11-12-how-real-world-businesses-are-transforming-with-ai/

- How Voice Assistants for Business Are Improving Efficiency - MoldStud, https://moldstud.com/articles/p-voice-assistants-for-business-improving-efficiency

- Use Cases for Speech Data in AI: Applications & Innovations - Waywithwords.net, https://waywithwords.net/resource/exploring-use-cases-speech-data-in-ai/

- Top 11 Voice Recognition Applications & Examples in 2025 - Research AIMultiple, https://research.aimultiple.com/voice-recognition-applications/

- 15 Profitable Voice AI Use Cases for Agencies - VoiceAIWrapper, https://voiceaiwrapper.com/blog/15-profitable-voice-ai-use-cases-for-agencies

- Voice Assistants: Use Cases & Examples for Business [2025] - Master of Code, https://masterofcode.com/blog/voice-assistants-use-cases-examples-for-business