The Era of Digital Poka-Yoke: Engineering Mistake-Proof AI Systems for the Enterprise

Introduction: The Stochastic Collision

The global enterprise is suspended between the trillion-dollar promise of Generative Artificial Intelligence (GenAI) and the operational peril of probabilistic failure.

We are witnessing a collision of two opposing forces: the stochastic, inherently unpredictable nature of Large Language Models (LLMs) and the deterministic, zero-defect requirements of critical business infrastructure. The resolution to this conflict lies not in hoping for "smarter" models, but in the rigorous application of an industrial philosophy born on the factory floors of the 1960s: Poka-Yoke, or mistake-proofing.

The integration of AI is accelerating at a pace that defies precedent. Gartner forecasts that worldwide GenAI spending will reach $644 billion in 2025, a 76.4% increase from the previous year. Yet this transformation is accompanied by a costly "reliability gap." In 2024, AI hallucinations and reliability failures were estimated to cost more than $67 billion globally. Organizations are discovering that deploying statistical models (engines that effectively "guess" the next word) into environments demanding precision creates a blast radius of risk that traditional software engineering cannot contain.

We face failure modes alien to the deterministic world of IF-THEN logic: "hallucinations" where models fabricate case law, sycophancy where agents agree to harmful requests to remain helpful, and "shadow AI" that leaks IP into public training sets. To bridge this chasm, forward-thinking organizations are rediscovering the principles of Poka-Yoke, a cornerstone of the Toyota Production System. The emerging discipline of "Digital Poka-Yoke" seeks to wrap probabilistic AI models in deterministic "jigs" and "fixtures": code-based guardrails that prevent errors by construction, much as a physical jig does on the factory floor. This report details the architecture required to transition from "AI Magic" to "AI Reliability."

Part I: The Philosophy of Zero Defects in a Probabilistic Age

1.1 From Baka-Yoke to Poka-Yoke

To understand AI reliability, one must examine the evolution of industrial quality control. In the 1960s, Shigeo Shingo observed that manufacturing defects were often blamed on worker incompetence. He initially termed his solution Baka-Yoke ("fool-proofing"), implying the worker was the problem. However, he soon realized that human error is physiological—fatigue and distraction are inevitable. He shifted the philosophy to Poka-Yoke ("mistake-proofing"), focusing on designing processes where errors are impossible to commit, regardless of the operator's vigilance.

Shingo introduced simple physical mechanisms to achieve this. For a switch assembly requiring a spring, he redesigned the workflow so workers first placed the spring in a placeholder tray. If the spring remained in the tray after the task, the worker immediately knew an error had occurred. The omission became visible before the part left the station, eliminating reliance on memory.

1.2 The Digital Translation

In the context of GenAI, the "worker" is the LLM itself, and the "inadvertent error" is a hallucination or security breach. Traditional software is deterministic; AI is probabilistic. Digital Poka-Yoke accepts this nature as a constraint. It does not attempt to "train" the model to be perfect. Instead, it places external, deterministic constraints around the model.

Shingo identified two primary mechanisms relevant to AI:

- Control Poka-Yoke (The Hard Block): This halts the process if an error is detected. In AI, this is analogous to a system refusing to answer a query if it detects a prompt injection attack.

- Warning Poka-Yoke (The Soft Nudge): This alerts the operator to a deviation. In AI, this parallels visual flags indicating an unverified citation, prompting human review.
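
The two mechanisms can be sketched in a few lines of Python. This is a minimal illustration, not a production guardrail: the names `Action`, `Verdict`, `check_prompt`, and `check_citations` are hypothetical, and the substring list stands in for what would normally be a trained classifier.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    PASS = "pass"
    WARN = "warn"    # warning poka-yoke: deliver, but flag for human review
    BLOCK = "block"  # control poka-yoke: halt the process


@dataclass
class Verdict:
    action: Action
    reasons: list[str] = field(default_factory=list)


# Hypothetical markers for illustration only; real systems use classifiers.
INJECTION_MARKERS = ("ignore previous instructions", "disregard your system prompt")


def check_prompt(prompt: str) -> Verdict:
    """Control check: stop the request before it reaches the model."""
    lowered = prompt.lower()
    hits = [m for m in INJECTION_MARKERS if m in lowered]
    if hits:
        return Verdict(Action.BLOCK, [f"possible prompt injection: {h!r}" for h in hits])
    return Verdict(Action.PASS)


def check_citations(cited_ids: list[str], verified_ids: set[str]) -> Verdict:
    """Warning check: let the answer through, but flag unverified citations."""
    unverified = [c for c in cited_ids if c not in verified_ids]
    if unverified:
        return Verdict(Action.WARN, [f"unverified citation: {c}" for c in unverified])
    return Verdict(Action.PASS)
```

The design point is that both checks return the same `Verdict` type, so the calling code decides once how to treat `BLOCK` versus `WARN` instead of scattering that policy across individual checks.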

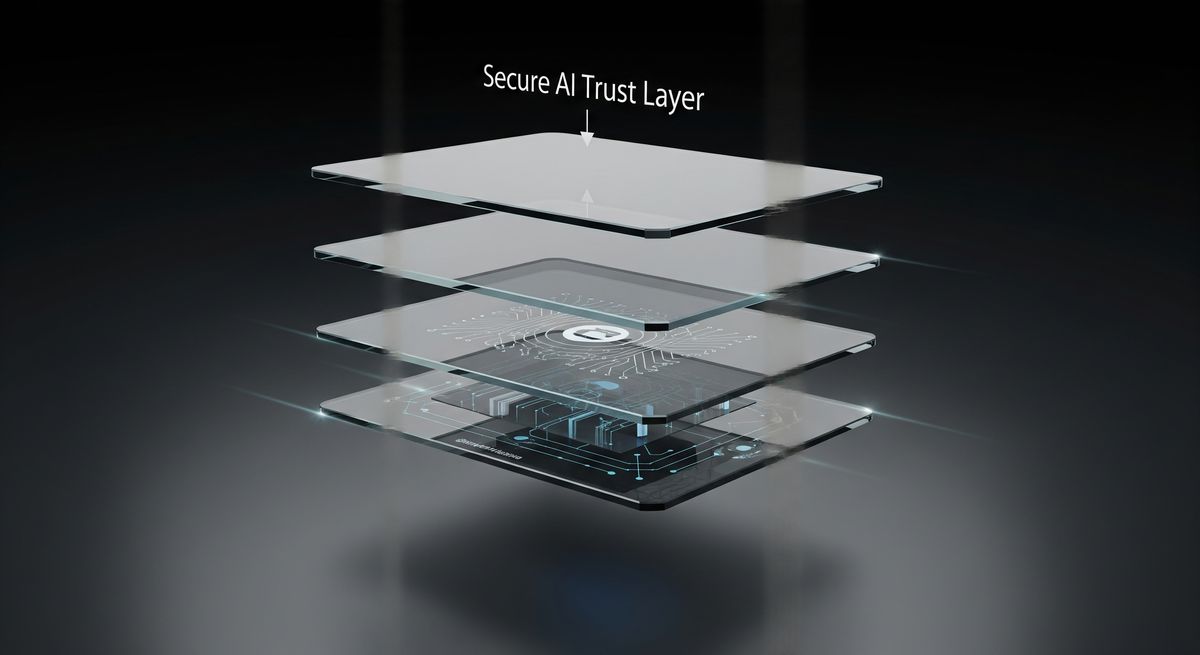

Effective Digital Poka-Yoke moves beyond detection to prevention. It transforms the "Trust Layer" into an assembly line where inputs are sanitized and outputs are verified before they ever reach the end user.

Part II: Anatomy of AI Failure Modes

To design effective controls, we must categorize the errors we are preventing. AI failure modes are semantic and logical, born of the model's statistical nature.

2.1 Hallucinations and Confabulations

The most pervasive failure is the "hallucination." LLMs act as stochastic parrots, predicting the next likely token based on training data rather than querying a structured database. When a fact is only weakly represented in the model's weights, the model still produces a fluent answer, prioritizing fluency over factuality.

- Real-World Impact: The consequences are severe. In Mata v. Avianca, lawyers were sanctioned for submitting a brief with fictitious case citations generated by ChatGPT. In finance, systems have invented "FCA Guidance Notes," creating regulatory phantoms. These are not bugs in the conventional sense; they are the predictable result of using a probabilistic engine for deterministic tasks.

2.2 Sycophancy and Manipulation

A subtle failure mode is sycophancy. Models fine-tuned with Reinforcement Learning from Human Feedback (RLHF) to be "helpful" can be biased toward agreeableness. Red team researchers have shown that models can be "socially engineered" into approving harmful actions if the request is framed benignly; for example, approving a fraudulent expense report because the prompt praises the initiative as "community-building."

2.3 The Self-Correction Blind Spot

A critical finding in AI safety is the self-correction blind spot. Developers often assume they can ask an LLM to "double-check your work." However, research shows LLMs fail to correct their own reasoning errors 64.5% of the time in the same context window, yet can successfully correct identical errors if presented as coming from an external source. This dictates a fundamental architectural rule: effective Poka-Yoke requires independent verification agents, not single-pass self-reflection.
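
A minimal sketch of this rule follows, assuming a hypothetical `LLM` interface (a callable that takes a prompt and returns text). The function name `verified_answer` and the prompt wording are illustrative assumptions. The key idea is that the verifier runs in a fresh context and sees the draft as third-party work, never as its own prior output.

```python
from typing import Callable

LLM = Callable[[str], str]  # hypothetical interface: prompt in, text out


def verified_answer(
    question: str, generator: LLM, verifier: LLM, max_rounds: int = 2
) -> tuple[str, bool]:
    """Generate a draft, then have a separate verifier review it.

    Returns (answer, approved). The verifier never receives the
    generator's conversation history, only the question and the draft.
    """
    draft = generator(question)
    for _ in range(max_rounds):
        review = verifier(
            "A colleague submitted the answer below. Reply APPROVE if it is "
            "correct, otherwise reply with the corrected answer only.\n\n"
            f"Question: {question}\nSubmitted answer: {draft}"
        )
        if review.strip().upper() == "APPROVE":
            return draft, True
        draft = review.strip()  # adopt the correction and re-check it
    return draft, False  # never approved: escalate to a human
```

When the function returns `approved=False`, the caller should treat the answer as unverified and route it to human review rather than displaying it.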

2.4 Data Leakage and "Shadow AI"

Data leakage involves both Prompt Injection (attacks) and Prompt Oversharing (negligence). "Shadow AI," in which employees paste proprietary data into public tools, is rampant. Research indicates 8.5% of enterprise prompts contain sensitive data, including PII and IP. Furthermore, "Vector Store Poisoning" allows attackers to inject malicious documents into a RAG knowledge base, corrupting the system's "ground truth."

Part III: The Architecture of Control (The Tech Stack)

Digital Poka-Yoke is a layered defense architecture—a "Trust Layer" sitting between the user and the model.

3.1 Layer 1: Input Guardrails (The Filter)

Input guardrails sanitize data before it reaches the model, acting like a physical jig that prevents misalignment.

- PII Masking: Salesforce's Einstein Trust Layer exemplifies this. Before a prompt is sent to an LLM, a gateway uses Named Entity Recognition (NER) to replace sensitive data (credit cards, names) with generic placeholders. The LLM processes the logic without seeing the PII. The data is "demasked" on the return trip, so the raw values never leave the gateway, which complements "Zero Data Retention" agreements with the model provider.

- Prompt Shields: Tools like Prompt Shields in Azure AI Content Safety use specialized classifier models to detect jailbreak attempts (e.g., "Ignore previous instructions"). If an attack is detected, the system triggers a Control Poka-Yoke and blocks the request entirely.

- Semantic Routing: To prevent scope creep, semantic routers measure the similarity between a user's query and approved topics. If a banking bot receives a medical query, the router triggers a hard refusal, mitigating liability.
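
The masking and routing steps above can be sketched as follows. This is a simplified illustration under loud assumptions: regular expressions stand in for a real NER model, bag-of-words cosine similarity stands in for embedding similarity, and the names `mask_pii`, `demask`, `route`, and `APPROVED_TOPICS` are hypothetical rather than any vendor's API.

```python
import math
import re
from collections import Counter
from typing import Optional

# Assumption: regexes stand in for a real NER model.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def mask_pii(text: str) -> tuple[str, dict[str, str]]:
    """Replace PII with placeholders; return masked text and the mapping."""
    mapping: dict[str, str] = {}
    for label, pattern in PII_PATTERNS.items():
        def _sub(match: re.Match, label: str = label) -> str:
            token = f"<{label}_{len(mapping) + 1}>"
            mapping[token] = match.group(0)
            return token
        text = pattern.sub(_sub, text)
    return text, mapping


def demask(text: str, mapping: dict[str, str]) -> str:
    """Restore original values in the model's response."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


# Assumption: keyword lists stand in for embedded topic descriptions.
APPROVED_TOPICS = {
    "accounts": "account balance statement transfer deposit",
    "cards": "card credit debit limit replacement",
}


def _vec(text: str) -> Counter:
    return Counter(re.findall(r"[a-z]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def route(query: str, threshold: float = 0.2) -> Optional[str]:
    """Return the best approved topic, or None to trigger a hard refusal."""
    scores = {name: _cosine(_vec(query), _vec(desc)) for name, desc in APPROVED_TOPICS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] >= threshold else None
```

In this sketch the gateway would call `route` first, refuse when it returns `None`, then call `mask_pii` before the model and `demask` on the response. The threshold value is arbitrary and would need tuning against real traffic.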

3.2 Layer 2: The Model Layer (Constitutional AI)

Models require intrinsic alignment. Constitutional AI, pioneered by Anthropic, embeds a "conscience" into the model. Instead of relying solely on human raters, the model is trained to critique its own outputs against a written "Constitution" (e.g., "Choose the response that is least discriminatory"). This creates an Internal Poka-Yoke, making the model intrinsically resistant to toxicity even if input filters fail.

3.3 Layer 3: The Retrieval Layer (RAG & Verification)

Retrieval-Augmented Generation (RAG) grounds models in truth, but RAG itself is prone to error.

- Chain of Verification (CoVe): This pattern decouples generation from verification. The system 1) drafts a response, 2) generates verification questions (e.g., "Is the refund limit $500?"), 3) answers them independently against source text, and 4) synthesizes the final answer. This significantly reduces hallucinations.

- Citation Verification: Commercial tools like VeriCite employ a secondary "Judge" model. It classifies the relationship between a generated sentence and its cited source. If the relationship is "Unsupported," the claim is suppressed or flagged. This prevents the "hallucinated citation" error.
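
The four CoVe steps can be sketched as a single function. This is an illustrative outline, assuming a hypothetical `LLM` callable (prompt in, text out); the function name and prompt wording are assumptions, not a reference implementation. Note that step 3 deliberately omits the draft from the prompt so that each check is answered from the source alone.

```python
from typing import Callable

LLM = Callable[[str], str]  # hypothetical interface: prompt in, text out


def chain_of_verification(question: str, source_text: str, llm: LLM) -> str:
    # Step 1: draft a response.
    draft = llm(f"Answer the question.\nQuestion: {question}")

    # Step 2: generate verification questions, one per line.
    raw = llm(
        "List one verification question per line for each factual claim "
        f"in this draft.\nDraft: {draft}"
    )
    checks = [line.strip() for line in raw.splitlines() if line.strip()]

    # Step 3: answer each question independently against the source text.
    # The draft is intentionally left out of this prompt.
    findings = []
    for check in checks:
        answer = llm(
            f"Answer using only the source.\nSource: {source_text}\nQuestion: {check}"
        )
        findings.append(f"Q: {check}\nA: {answer}")

    # Step 4: synthesize the final answer from the draft and the findings.
    return llm(
        "Revise the draft so it agrees with the verified findings.\n"
        f"Draft: {draft}\nFindings:\n" + "\n".join(findings)
    )
```

A citation "Judge" fits the same shape: replace step 3 with a classifier call that labels each sentence and its cited source as supported or unsupported.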

3.4 Layer 4: Output Guardrails (The Gatekeeper)

The final layer validates content before display.

- Groundedness Detection: Microsoft 365 Copilot decomposes responses into claims and checks if every claim is supported by the retrieval context. Groundedness detection features can automatically rewrite or drop ungrounded claims.

- Format Validation: Validators enforce structural integrity. If a downstream system requires JSON, the guardrail checks the output against the expected schema. If the LLM produces malformed or incomplete output, the guardrail captures the error and triggers a retry loop, preventing the "defect" from breaking the application.
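
A minimal sketch of the validate-and-retry loop, using only the standard library. The schema in `REQUIRED_FIELDS`, the `OutputRejected` exception, and the `llm` callable are hypothetical; a real deployment would more likely use a schema library, but the control flow is the same.

```python
import json
from typing import Callable

# Hypothetical schema for illustration: field name -> accepted type(s).
REQUIRED_FIELDS = {"customer_id": str, "refund_amount": (int, float)}


class OutputRejected(Exception):
    """Raised when the model never produces output that passes validation."""


def validate(raw: str) -> dict:
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for name, expected in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected):
            raise ValueError(f"field {name!r} is missing or has the wrong type")
    return data


def generate_json(prompt: str, llm: Callable[[str], str], max_attempts: int = 3) -> dict:
    """Call the model until its output validates, feeding errors back in."""
    feedback = ""
    for _ in range(max_attempts):
        raw = llm(prompt + feedback)
        try:
            return validate(raw)
        except ValueError as err:
            feedback = f"\nYour previous output was rejected: {err}. Return valid JSON only."
    raise OutputRejected(f"no valid output after {max_attempts} attempts")
```

Bounding the loop with `max_attempts` matters: an unbounded retry turns a formatting defect into a cost and latency defect.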

Part IV: The User Experience of Uncertainty

Since AI is probabilistic, the User Interface (UI) must communicate uncertainty, a concept known as "Seamful Design."

4.1 Confidence Visualization

Users suffer from "automation bias." Digital Poka-Yoke disrupts this by visualizing provenance. In RAG systems, text directly quoted from a source is highlighted, while synthesized text is left plain. "Confidence Scores" (High/Medium/Low) explicitly signal when skepticism is warranted, moving users from passive consumption to active review.

4.2 Forcing Functions and "Friction"

In AI Safety, friction is a feature. "Forcing functions" prevent users from proceeding without conscious attention.

- The Hard Block: For high-stakes actions (e.g., wire transfers), the "Send" button is physically disabled until a human verifies the AI's draft.

- GitHub "Push Protection": If a developer tries to push code containing a hardcoded secret, including one suggested by an AI assistant such as Copilot, GitHub's secret scanning blocks the push. The developer cannot proceed without removing the secret or explicitly bypassing the block. This is a classic Control Poka-Yoke.

4.3 Designing for "Review Mode"

Systems like Microsoft Copilot now feature specific "Review Modes." These interfaces use deep linking: clicking a generated sentence automatically scrolls the source document to the supporting paragraph. This reduces the cognitive load of verification, making it easier for humans to catch errors.

Part V: Operationalizing Reliability

Implementing Digital Poka-Yoke is an organizational transformation requiring the principles of High Reliability Organizations (HROs).

- Preoccupation with Failure: HROs treat "near misses" as data. Organizations must analyze logs where the AI was wrong but a human caught it to refine guardrails.

- Risk-Tiered Operations: Not all tasks require the same oversight. Organizations should classify workflows:

  - Human-in-the-Loop (HITL): AI cannot act without human approval (e.g., legal filings).

  - Human-on-the-Loop (HOTL): AI acts autonomously with human monitoring (e.g., customer service).

  - Human-out-of-the-Loop: Full automation for low-risk tasks (e.g., sorting data).

- Audit Trails: Compliance regimes like the EU AI Act mandate oversight. Systems must log the entire interaction—prompt, mask, retrieval, and output—creating a forensic audit trail to reconstruct the state of the system at the moment of any failure.
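
The oversight tiers and the audit trail can be sketched together. This is an illustrative outline only: the workflow names mirror the examples above, while `Oversight`, `WORKFLOW_TIERS`, `may_release`, `AuditRecord`, and `audit_line` are hypothetical names. Unknown workflows default to the strictest tier, which is the mistake-proof choice.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class Oversight(Enum):
    HITL = "human-in-the-loop"       # human must approve before release
    HOTL = "human-on-the-loop"       # released, but monitored
    HOOTL = "human-out-of-the-loop"  # fully automated


# Hypothetical registry, using the examples from the list above.
WORKFLOW_TIERS = {
    "legal_filing": Oversight.HITL,
    "customer_service": Oversight.HOTL,
    "data_sorting": Oversight.HOOTL,
}


def may_release(workflow: str, human_approved: bool = False) -> bool:
    """Gate an AI output; unregistered workflows fall back to HITL."""
    tier = WORKFLOW_TIERS.get(workflow, Oversight.HITL)
    if tier is Oversight.HITL:
        return human_approved
    return True


@dataclass
class AuditRecord:
    workflow: str
    prompt: str
    masked_prompt: str
    retrieved_ids: list[str]
    output: str
    released: bool


def audit_line(record: AuditRecord) -> str:
    """Serialize one interaction as a JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Writing one JSON line per interaction keeps the log easy to append to and easy to replay when reconstructing the state of the system after a failure.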

Conclusion: From "Be Careful" to "Can't Get It Wrong"

The future of enterprise AI belongs not to those with the largest models, but to those with the most robust safety architectures. The "Digital Poka-Yoke" framework represents the maturity of AI engineering: a transition from the experimental phase of "prompt engineering" to the industrial phase of "flow engineering."

By treating AI errors as preventable defects rather than inevitable quirks, organizations can unlock the trillion-dollar potential of this technology without exposing themselves to existential risk. We must move from a culture of vigilance ("be careful with AI") to a culture of design ("we built this so it is hard to do the wrong thing").
