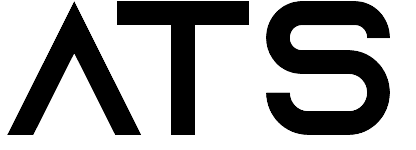

The global manufacturing landscape of 2026 is defined by a profound cognitive dissonance between what machines are capable of signaling and what organizations are permitted to execute. For nearly a century, the industrial sector has operated under the shadow of the unplanned downtime specter—a phenomenon that currently costs the average Fortune 500 company approximately $2.8 billion annually, representing roughly 11% of total revenue. In response to this existential threat, the Industry 4.0 revolution promised a shift from reactive to proactive maintenance, powered by artificial intelligence (AI) and the Industrial Internet of Things (IIoT). By early 2025, the technical promise had been realized: advanced deep learning models, specifically Convolutional Neural Networks (CNNs) and Long Short-Term Memory (LSTM) architectures, achieved near-total accuracy in detecting precursors to mechanical failure, such as spindle bearing wear, months before a functional breakdown occurs.

However, the "Predictive Paradox" remains: despite 99% to 100% model accuracy in high-stakes environments, many facilities continue to default to rigid, calendar-based maintenance schedules. This persistence reveals a fundamental truth about industrial digital transformation—the end of scheduled maintenance is not a data problem; it is a governance problem. The industry currently faces an AI leadership paradox where investment is high, but true integration remains elusive. CNC uptime is maximized only when organizational policy permits AI to override the traditional production calendar, yet the Model-First mindset remains fixated on sensor density while ignoring the "Policy-First" transformation required to realign organizational rules.

I. The False Promise of the "Smarter" Model

The industrial sector’s fixation on the "Model-First" approach is a legacy of early 2010s digital strategy, where the primary challenge was perceived as a lack of visibility. While building the data foundation for AI is essential, it is often not enough to change outcomes on its own. This perspective led to massive investments in sensor-rich environments; by 2026, roughly 80% of manufacturers plan to dedicate significant budgets to analytics, IoT sensors, and cloud/edge technologies.

The Predictive Paradox: Accuracy vs. Action

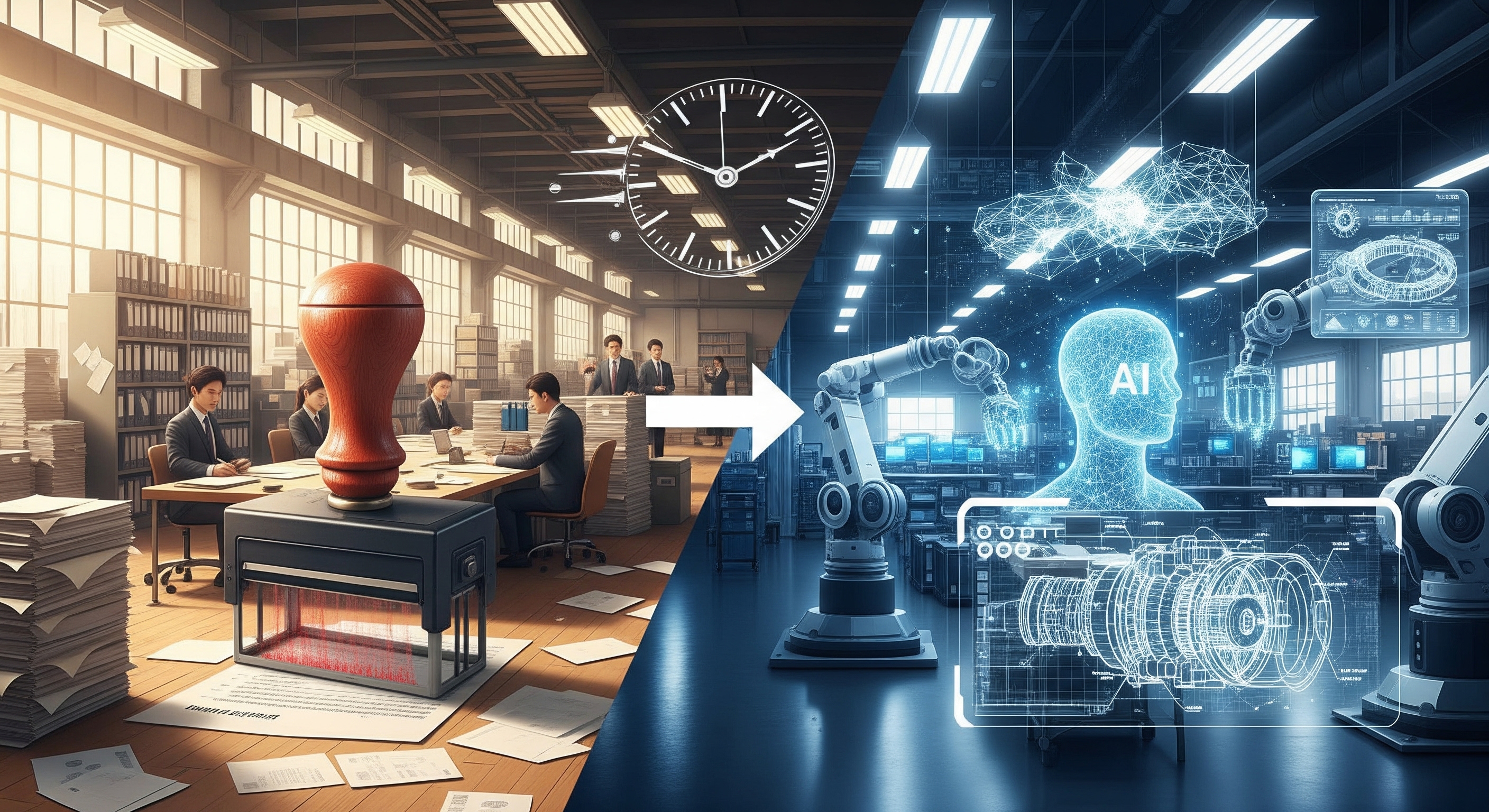

The state of the art in 2026 is staggering. Machine learning algorithms can now predict equipment failures 6 to 12 months ahead with accuracy rates exceeding 85%, while properly implemented IoT-powered systems can exceed 90%. For spindle bearings, the high-speed heart of CNC machining, reported results are higher still: research that feeds Continuous Wavelet Transform (CWT)-based scalograms into CNN models has demonstrated 100% classification accuracy for bearing faults under varying load conditions.

The Predictive Paradox lies in the fact that this high accuracy has not translated into a proportional abandonment of time-based preventive maintenance (PM). The "Model-First" approach fails because it treats the AI as an advisor rather than an authorized actor; true transformation requires moving from reactive to intelligent machine health. When an AI agent signals that a spindle bearing has 400 hours of remaining useful life (RUL), but the maintenance calendar mandates a replacement today, the human hierarchy almost always defaults to the calendar.

The Spindle Bearing Case Study: Mathematical Foundations of Failure

The ability of AI to detect wear is rooted in the physics of rotating components. Bearing defects generate oscillations in specific frequency bands, such as the Ball Pass Frequency of the Outer race (BPFO) or the Ball Pass Frequency of the Inner race (BPFI). In their standard kinematic form, these frequencies are calculated as follows:

$$
\mathrm{BPFO} = \frac{n}{2}\, f_r \left(1 - \frac{d}{D}\cos\alpha\right), \qquad
\mathrm{BPFI} = \frac{n}{2}\, f_r \left(1 + \frac{d}{D}\cos\alpha\right)
$$

Where n is the number of rolling elements, f_r is the relative rotational speed between the races (the shaft frequency when the outer race is fixed), d is the ball diameter, D is the pitch diameter, and alpha is the contact angle. While traditional vibration analysis requires a human expert to identify these peaks, AI models process raw sensor data to identify subtle shifts along the "Potential Failure" (P) to "Functional Failure" (F) curve. Despite the mathematical precision of these signals, organizations struggle to trust the AI's "Early Warning" because the physical symptoms (heat, noise) are not yet apparent.
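As a minimal sketch of the frequency calculation described above, the following Python function evaluates the standard textbook expressions for BPFO and BPFI using the symbols defined in the text. The function name and the bearing dimensions in the usage lines are illustrative assumptions, not values from any specific spindle.

```python
import math


def bearing_fault_frequencies(n, f_r, d, D, alpha_deg=0.0):
    """Return (BPFO, BPFI) in the same units as f_r.

    n         -- number of rolling elements
    f_r       -- relative rotational speed of the races (e.g. shaft Hz)
    d         -- ball diameter (same length unit as D)
    D         -- pitch diameter
    alpha_deg -- contact angle in degrees
    """
    # Geometry term shared by both expressions.
    ratio = (d / D) * math.cos(math.radians(alpha_deg))
    bpfo = (n / 2) * f_r * (1 - ratio)  # outer-race defect frequency
    bpfi = (n / 2) * f_r * (1 + ratio)  # inner-race defect frequency
    return bpfo, bpfi


# Hypothetical bearing: 9 balls, 200 Hz shaft speed (12,000 rpm),
# 7.94 mm balls on a 39.04 mm pitch diameter, zero contact angle.
bpfo, bpfi = bearing_fault_frequencies(9, 200.0, 7.94, 39.04, 0.0)
print(f"BPFO = {bpfo:.1f} Hz, BPFI = {bpfi:.1f} Hz")
```

A quick sanity check on any result is that BPFO and BPFI always sum to n times f_r, since the geometry term cancels.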

II. From Rigid Calendars to Dynamic Trust: Breaking the "Safety" Policy

The persistence of the maintenance calendar is not merely a matter of habit; it is a manifestation of "Industry 3.0" governance—a defensive crouch designed to mitigate the risks of a world where data was scarce. In that era, the "safety" policy was the fixed schedule.

The Ghost of Industry 3.0: Defensive Maintenance

Scheduled maintenance is often a policy-driven reaction to the extreme costs of reactive failure: emergency repairs cost 3-5 times more than planned activities. However, the Industry 3.0 model creates two problems of its own. First is "over-maintenance," where parts are replaced based on time despite having significant RUL; studies suggest that 30% of preventive maintenance is performed too frequently. Second is silent failure, where a machine fails between scheduled intervals because its actual usage exceeded the "average" assumptions of the calendar.

The Permission Gap: Who Owns the Decision?

The most significant barrier to AI transformation is the "Permission Gap." This is the structural reality where shop floor managers lack the authority to cancel a scheduled "PM" even when AI diagnostics show a fully healthy component. In 2026, although 65% of maintenance teams plan to use AI, fewer than 32% have the governance frameworks to allow that AI to actually change the schedule. This reflects the broader difficulty of scaling AI in operations.

This gap is exacerbated by the lack of ModelOps—the operational framework enabling organizations to implement responsible AI that meets transparency and accountability requirements. Without a clear "Designated Approving Authority" (DAA) for AI-driven decisions, the path of least resistance is to follow the legacy calendar.

Policy Shift 1: Implementing "Condition-Based Authority"

To transition from Industry 3.0 to 4.0, organizations must implement a condition-based maintenance policy. This policy shifts the burden of proof from the maintenance team (proving a machine is healthy enough to skip service) to the schedule (proving why it needs to stop). Under this framework, maintenance is performed only when there is objective evidence of a developing fault. By using PLC data to track actual usage, facilities stop over-maintaining idle machines and under-maintaining busy ones.
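One way to picture a condition-based authority rule is as a small decision function in which the calendar can no longer trigger a stop on its own. The sketch below is hypothetical: the field names, the `rul_floor_hours` and `confidence_floor` thresholds, and the three outcome labels are assumptions chosen for illustration, and a real policy would be set by the plant's approving authority.

```python
from dataclasses import dataclass


@dataclass
class AssetState:
    rul_hours: float         # model's remaining-useful-life estimate
    fault_confidence: float  # 0..1 confidence that a fault is developing
    pm_due: bool             # legacy calendar says a PM is due today


def maintenance_decision(state, rul_floor_hours=100.0, confidence_floor=0.8):
    """Return 'service', 'defer_pm', or 'run' (hypothetical labels).

    The burden of proof sits with the schedule: a stop needs objective
    evidence of a developing fault, not just a calendar entry.
    """
    fault_evidence = (
        state.fault_confidence >= confidence_floor
        or state.rul_hours <= rul_floor_hours
    )
    if fault_evidence:
        return "service"   # evidence justifies stopping the machine
    if state.pm_due:
        return "defer_pm"  # calendar PM is overridden and logged for review
    return "run"


# The scenario from earlier in the article: 400 hours of RUL, PM due today.
print(maintenance_decision(AssetState(rul_hours=400, fault_confidence=0.05, pm_due=True)))
```

Returning an explicit `defer_pm` outcome, rather than silently skipping the PM, keeps an audit trail for whoever holds approving authority.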

III. Rewiring the Shop Floor: Incentives Over Insights

The failure of AI initiatives often begins in the boardroom, where Key Performance Indicators (KPIs) are defined. Traditional metrics often penalize the very behaviors that AI-driven maintenance seeks to encourage.

The Misalignment of KPIs: The OEE Trap

OEE (Overall Equipment Effectiveness) is the industry standard for measuring productivity, but its traditional calculation can act as a poison pill for AI adoption. In most legacy environments, "Availability" is measured against "Scheduled Production Time." If an AI model detects a performance degradation and recommends a mid-shift intervention, the plant manager is penalized because the intervention is recorded as "Unplanned Downtime."
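The penalty can be made concrete with the availability component of OEE, which is conventionally run time divided by planned production time. The figures below (a 40-hour week and a 2-hour AI-recommended intervention) are illustrative assumptions, as is the idea of reclassifying the intervention as a planned stop.

```python
def availability(planned_production_h, unplanned_downtime_h):
    """OEE availability: run time divided by planned production time."""
    run_time_h = planned_production_h - unplanned_downtime_h
    return run_time_h / planned_production_h


scheduled_h = 40.0     # hypothetical weekly scheduled production time
intervention_h = 2.0   # hypothetical AI-recommended mid-shift intervention

# Legacy accounting: the intervention is booked as unplanned downtime.
legacy = availability(scheduled_h, intervention_h)

# Alternative accounting (an assumption): the intervention is treated as a
# planned stop, so it is removed from planned production time instead.
reclassified = availability(scheduled_h - intervention_h, 0.0)

print(f"legacy availability:       {legacy:.0%}")
print(f"reclassified availability: {reclassified:.0%}")
```

Under the legacy accounting the manager who acts on the alert reports a lower score than one who ignores it, even though the same two hours of work were done.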

Managers are thus incentivized to hit a weekly production quota rather than maximizing the Asset Life-Cycle Value (ALV). This misalignment leads to machines being pushed to the point of catastrophic failure, which destroys the long-term value of the asset for the sake of a short-term metric.

The Hidden "Process Debt"

Beyond KPIs, factories are weighed down by "Process Debt"—the accumulation of legacy policies like fixed batch sizes and rigid shift handovers that make AI-driven maintenance physically impossible to execute.

- Legacy Batch-Processing: Systems designed decades ago store data in silos, making real-time AI inference impossible.

- Technical Debt: Maintaining outdated systems consumes 60-80% of IT budgets, and accumulated tech debt effectively chokes the infrastructure required to scale AI.

Policy Shift 2: Reconstructing Incentive Frameworks

To rewire the shop floor, organizations must move from "Up-Time per Shift" to "Asset Life-Cycle Value." This involves rewarding teams for avoiding catastrophic failures rather than just hitting a weekly production quota. A "Self-Correcting Factory" uses "Action-Literate" AI agents that respect the plant's real constraints—inventory, lead times, and safety—to deliver ready-to-run plans rather than just warning lights.

IV. The Human-in-the-Loop: Upskilling as a Structural Policy

The most sophisticated AI model will fail if the maintenance technician does not trust it. This Explainability Gap is a primary reason why AI alerts are frequently ignored. Solving this trust barrier often requires engineering mistake-proof AI systems that guide workers rather than confusing them.

Democratizing Data Access: Breaking Silos

Transformation requires moving away from "Data Silos." In 2026, winning teams use platforms like iMaintain to create a living knowledge base where every repair and tweak is recorded and shared. This democratized access allows technicians to see the why behind the AI’s alert.

Policy Shift 3: Mandating Cross-Functional Upskilling

Upskilling must be treated as a structural policy, not an elective. By 2026, AI fluency is becoming a core requirement for maintenance staff, reflecting the critical shifts detailed in our analysis of the AI talent landscape 2025. Technicians must evolve from "wrench-turners" to "AI Interpreters" who can validate AI-generated inspection results.

Case Study: The Taichung "Golden Valley"

In Taichung, Taiwan, the Center of Excellence on Smart Manufacturing is already implementing these programs. By creating a "Platform Ecosystem," manufacturers utilize "AI Agents" to shorten design cycles and improve quality. This regional hub model demonstrates that AI literacy drives massive economic benefits.

V. Conclusion: Building the "Self-Correcting" Factory

The future of industrial maintenance is not one of more data, but of better governance. Uptime is a result of how an organization reacts to data. In 2026, the real value will accrue to the "Early Adopters" who move from pilot to production by redefining their governance.

The Competitive Moat: Bravery Over Technology

The global predictive maintenance market is expected to grow significantly by 2030. Leaders, however, will be the organizations that treat maintenance as a strategic lever for operational excellence rather than as a cost center.

Final Call to Action: Auditing Your "Unspoken Rules"

- Identify the Permission Gap: Who has the authority to stop a line based on a probability of failure?

- Expose the KPI Conflict: Does your OEE score penalize your maintenance lead for preventing a disaster?

- Map the Process Debt: Which legacy policies are preventing your AI from actually saving your machines?

The economic impact of achieving 100% uptime is transformative. The technology is here; the question is whether your governance policy will allow you to use it.

---

The shift toward a self-correcting factory represents more than a technological upgrade; it is a fundamental realignment of industrial governance. Achieving this requires not just the deployment of sophisticated models, but the dismantling of legacy policies that restrict agility. For organizations seeking to accelerate this transformation and integrate cutting-edge defect detection, predictive analytics, and automated decision-making into their workflows, ATS provides the specialized expertise necessary to turn the promise of industrial AI into a measurable, competitive advantage.